How to cache responses with Redis in Node.js

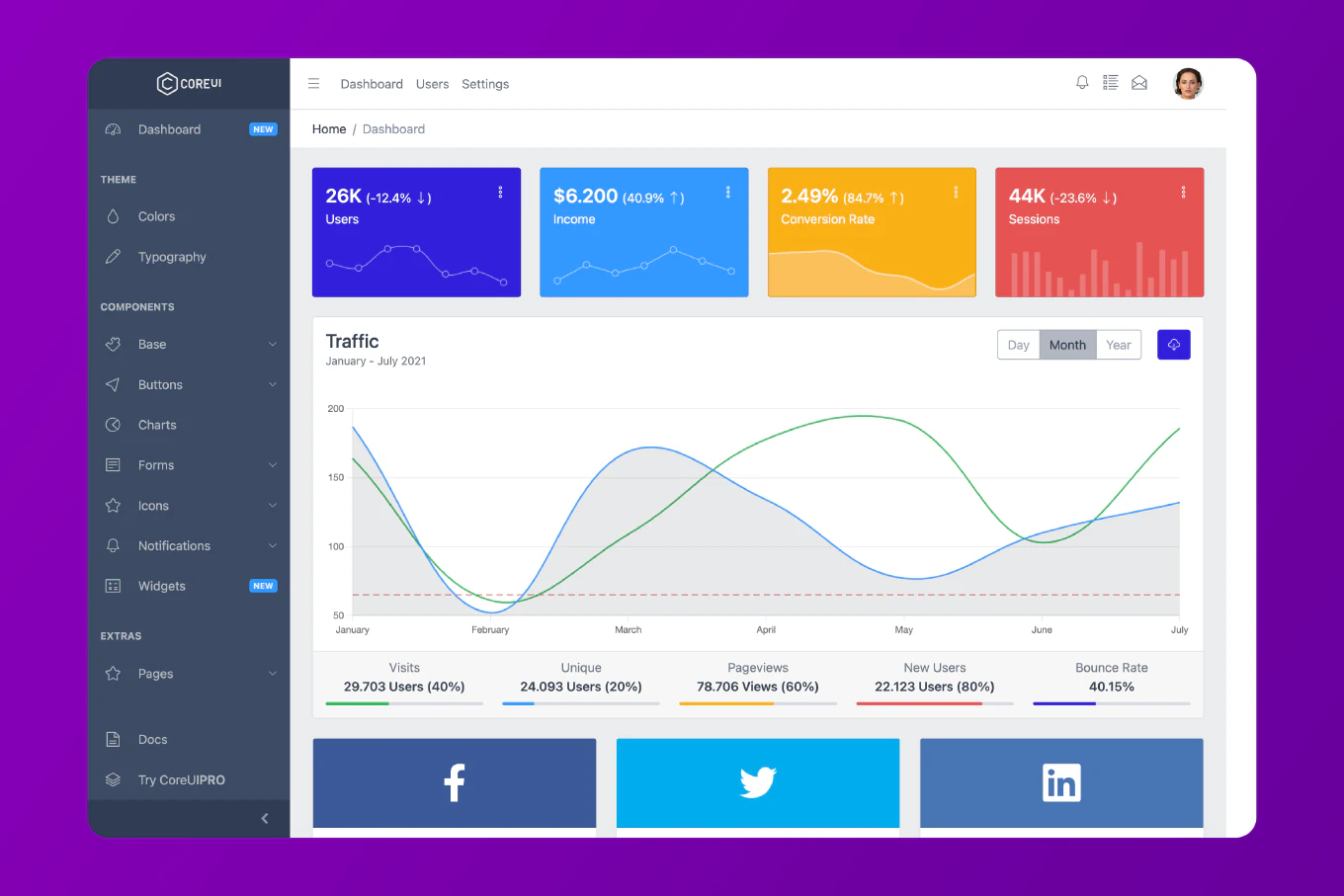

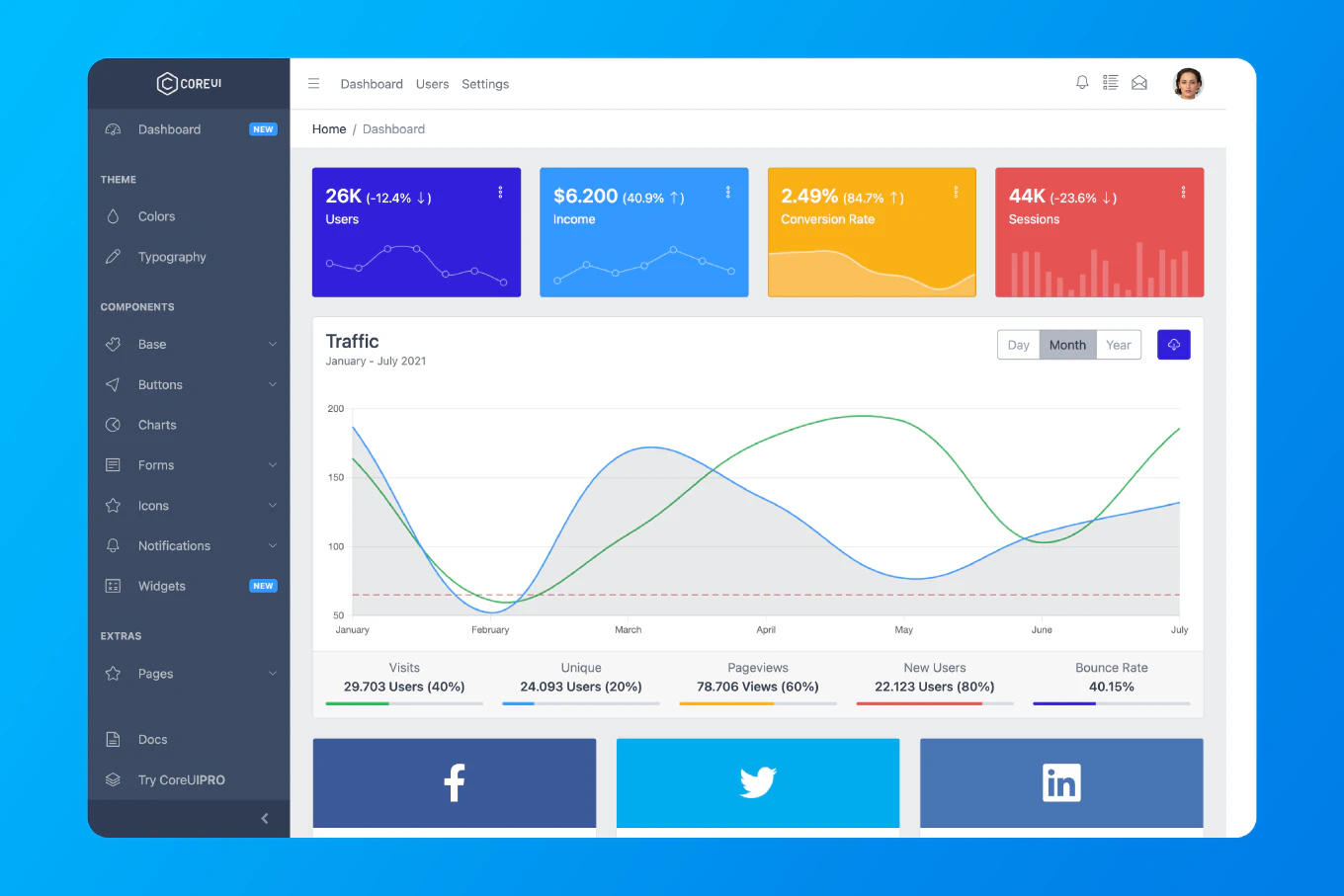

Caching API responses with Redis dramatically reduces database load and improves response times. As the creator of CoreUI with 12 years of Node.js backend experience, I’ve implemented Redis caching strategies that reduced API latency from 500ms to under 10ms for millions of requests daily.

The most effective approach combines the cache-aside pattern with TTL management and cache invalidation whenever the underlying data changes.
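
As a rough illustration of the cache-aside flow described above, the sketch below uses an in-memory Map as a stand-in for Redis so it runs without a server. The names `MemoryStore` and `getOrLoad` are hypothetical and exist only for this example; the Redis-backed version appears later in the article.

```javascript
// Minimal cache-aside sketch. A Map with expiry timestamps stands in for Redis
// so the example runs without a server (an assumption for illustration only).
class MemoryStore {
  constructor(now = () => Date.now()) {
    this.now = now
    this.entries = new Map()
  }

  get(key) {
    const entry = this.entries.get(key)
    if (!entry) return null
    if (entry.expiresAt <= this.now()) {
      // Expired entries behave like misses, as they would with a Redis TTL.
      this.entries.delete(key)
      return null
    }
    return entry.value
  }

  set(key, value, ttlSeconds) {
    this.entries.set(key, { value, expiresAt: this.now() + ttlSeconds * 1000 })
  }

  delete(key) {
    this.entries.delete(key)
  }
}

// Cache-aside: read the cache first, fall back to the source, then populate.
async function getOrLoad(store, key, loader, ttlSeconds) {
  const cached = store.get(key)
  if (cached !== null) return JSON.parse(cached)

  const fresh = await loader()
  // Values are serialized to strings, mirroring how they are stored in Redis.
  store.set(key, JSON.stringify(fresh), ttlSeconds)
  return fresh
}
```

Calling `store.delete(key)` after a write is the invalidation half of the pattern: the next read misses and reloads from the source.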

Install Redis Client

Install the Redis client:

npm install redis

Create Redis Client

Create src/config/redis.js:

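const redis = require('redis')

// node-redis v4 and later read host and port from the `socket` option.
const client = redis.createClient({
  socket: {
    host: process.env.REDIS_HOST || 'localhost',
    // Environment variables are strings, so convert the port to a number.
    port: Number(process.env.REDIS_PORT) || 6379
  },
  password: process.env.REDIS_PASSWORD
})

client.on('error', (err) => {
  console.error('Redis error:', err)
})

client.on('connect', () => {
  console.log('Connected to Redis')
})

// connect() returns a promise; log a failed initial connection instead of
// leaving the rejection unhandled.
client.connect().catch((err) => {
  console.error('Redis connection failed:', err)
})

module.exports = client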
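
If you want the connection settings to be testable on their own, one option is to build the options object in a small function. The sketch below assumes node-redis v4 or later, where `socket.reconnectStrategy` may be a function that returns the delay in milliseconds before the next attempt. The function name `buildRedisOptions` and the backoff numbers are illustrative assumptions.

```javascript
// Builds an options object for node-redis v4+ `createClient`.
// Environment variable names match the article; backoff values are assumptions.
function buildRedisOptions(env = process.env) {
  return {
    socket: {
      host: env.REDIS_HOST || 'localhost',
      port: Number(env.REDIS_PORT) || 6379,
      // Wait a little longer after each failed attempt, capped at 2 seconds.
      reconnectStrategy: (retries) => Math.min(retries * 100, 2000)
    },
    password: env.REDIS_PASSWORD || undefined
  }
}

// Usage (requires the `redis` package and a reachable server):
// const client = require('redis').createClient(buildRedisOptions())
```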

Cache Middleware

Create src/middleware/cache.js:

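const redis = require('../config/redis')

// Caches successful GET responses in Redis for `duration` seconds.
const cacheMiddleware = (duration = 300) => {
  return async (req, res, next) => {
    // Only GET requests are cached.
    if (req.method !== 'GET') {
      return next()
    }

    const key = `cache:${req.originalUrl || req.url}`

    try {
      const cached = await redis.get(key)
      if (cached) {
        return res.json(JSON.parse(cached))
      }

      // Wrap res.json so the handler's response is stored on the way out.
      const originalJson = res.json.bind(res)
      res.json = (data) => {
        // Cache only successful responses, and catch write failures so they
        // do not surface as unhandled promise rejections.
        if (res.statusCode >= 200 && res.statusCode < 300) {
          redis.setEx(key, duration, JSON.stringify(data)).catch((error) => {
            console.error('Cache write error:', error)
          })
        }
        return originalJson(data)
      }

      next()
    } catch (error) {
      // If Redis is unavailable, fall through to the route handler.
      console.error('Cache error:', error)
      next()
    }
  }
}

module.exports = cacheMiddleware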
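
The middleware keys entries by the raw URL, so `/api/users?a=1&b=2` and `/api/users?b=2&a=1` end up as two separate cache entries. A possible refinement, sketched below, is to sort the query parameters before building the key. The helper name `buildCacheKey` is hypothetical, and the base URL is only a placeholder required by the `URL` constructor.

```javascript
// Builds a cache key from the request path and query string, sorting query
// parameters so equivalent URLs map to the same cache entry.
function buildCacheKey(originalUrl, prefix = 'cache:') {
  // The base is a placeholder; only the path and query are used.
  const url = new URL(originalUrl, 'http://placeholder.local')
  url.searchParams.sort()
  const query = url.searchParams.toString()
  return `${prefix}${url.pathname}${query ? `?${query}` : ''}`
}
```

Inside the middleware this would replace the key line with `buildCacheKey(req.originalUrl || req.url)`. If responses differ per user, the key would also need a user identifier, which this sketch does not cover.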

Usage with Express

const express = require('express')
const cacheMiddleware = require('./middleware/cache')

const app = express()

// `db` stands in for your data access layer.

// Cache the user list for 10 minutes.
app.get('/api/users', cacheMiddleware(600), async (req, res) => {
  const users = await db.users.findAll()
  res.json(users)
})

// Cache individual posts for 5 minutes.
app.get('/api/posts/:id', cacheMiddleware(300), async (req, res) => {
  const post = await db.posts.findById(req.params.id)
  res.json(post)
})

Cache Service

Create src/services/cacheService.js:

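const redis = require('../config/redis')

class CacheService {
  // Returns the parsed value, or null on a miss or a Redis error.
  async get(key) {
    try {
      const data = await redis.get(key)
      return data ? JSON.parse(data) : null
    } catch (error) {
      console.error('Cache get error:', error)
      return null
    }
  }

  // Stores a JSON-serialized value with a TTL in seconds.
  async set(key, value, ttl = 3600) {
    try {
      await redis.setEx(key, ttl, JSON.stringify(value))
    } catch (error) {
      console.error('Cache set error:', error)
    }
  }

  async delete(key) {
    try {
      await redis.del(key)
    } catch (error) {
      console.error('Cache delete error:', error)
    }
  }

  // Deletes every key matching a glob pattern such as 'cache:/api/users*'.
  // KEYS walks the whole keyspace and blocks Redis while it runs, so on large
  // datasets prefer iterating with SCAN.
  async invalidatePattern(pattern) {
    try {
      const keys = await redis.keys(pattern)
      if (keys.length > 0) {
        await redis.del(keys)
      }
    } catch (error) {
      console.error('Cache invalidation error:', error)
    }
  }

  // Cache-aside helper: return the cached value or fetch, store, and return it.
  async getOrSet(key, fetcher, ttl = 3600) {
    const cached = await this.get(key)
    // Compare against null so cached falsy values (0, false, '') count as hits.
    if (cached !== null) {
      return cached
    }

    const data = await fetcher()
    await this.set(key, data, ttl)
    return data
  }
}

module.exports = new CacheService()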

Usage Example

const cacheService = require('./services/cacheService')

app.get('/api/users/:id', async (req, res) => {
  const { id } = req.params

  const user = await cacheService.getOrSet(
    `user:${id}`,
    () => db.users.findById(id),
    3600
  )

  res.json(user)
})
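
One case `getOrSet` does not handle is several requests missing the same key at the same moment: each one calls the fetcher. A possible mitigation, sketched below, is to share a single in-flight promise per key within one Node.js process. The wrapper name `coalesce` is hypothetical, and it does not coordinate across multiple server instances.

```javascript
// Wraps a getOrSet-style function so concurrent calls for the same key share
// one in-flight promise instead of each calling the fetcher.
function coalesce(getOrSet) {
  const inFlight = new Map()

  return (key, fetcher, ttl) => {
    if (inFlight.has(key)) {
      return inFlight.get(key)
    }

    const promise = Promise.resolve()
      .then(() => getOrSet(key, fetcher, ttl))
      // Clear the entry whether the call succeeded or failed.
      .finally(() => inFlight.delete(key))

    inFlight.set(key, promise)
    return promise
  }
}

// Usage with the service from this article:
// const getOrSetOnce = coalesce(cacheService.getOrSet.bind(cacheService))
```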

Cache Invalidation

Invalidate cache on updates:

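// Reading req.body requires a body parser such as app.use(express.json()).
app.put('/api/users/:id', async (req, res) => {
  const { id } = req.params
  const updates = req.body

  const user = await db.users.update(id, updates)

  // Drop the cached user, then any cached list responses stored by the
  // middleware under the 'cache:' prefix.
  await cacheService.delete(`user:${id}`)
  await cacheService.invalidatePattern('cache:/api/users*')

  res.json(user)
})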
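
Pattern invalidation built on the `KEYS` command blocks Redis while it walks the keyspace. A possible alternative is to iterate with `SCAN`, sketched below as a standalone function. It assumes the client exposes node-redis style `scanIterator` and `del` methods; because different node-redis major versions yield either single keys or arrays of keys, the sketch accepts both shapes. The function name `invalidateByScan` is hypothetical.

```javascript
// Deletes keys matching a glob pattern using SCAN instead of KEYS.
// `client` is assumed to expose node-redis style `scanIterator` and `del`.
async function invalidateByScan(client, pattern, batchSize = 100) {
  let deleted = 0
  let batch = []

  for await (const entry of client.scanIterator({ MATCH: pattern, COUNT: batchSize })) {
    // Accept both a single key and an array of keys per iteration.
    batch.push(...(Array.isArray(entry) ? entry : [entry]))

    if (batch.length >= batchSize) {
      deleted += await client.del(batch)
      batch = []
    }
  }

  // Delete whatever is left after the scan completes.
  if (batch.length > 0) {
    deleted += await client.del(batch)
  }
  return deleted
}
```

Deleting in batches keeps each `DEL` call small, so no single command holds Redis for long.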

Cache with Hash

For related data, group the values as fields of a single hash key. Add these methods to the CacheService class:

class CacheService {
  async hSet(key, field, value, ttl = 3600) {
    try {
      // Run HSET and EXPIRE in one transaction so the hash is never left
      // without a TTL. The TTL covers the whole hash and resets on each write.
      await redis
        .multi()
        .hSet(key, field, JSON.stringify(value))
        .expire(key, ttl)
        .exec()
    } catch (error) {
      console.error('Hash set error:', error)
    }
  }

  async hGet(key, field) {
    try {
      const data = await redis.hGet(key, field)
      return data ? JSON.parse(data) : null
    } catch (error) {
      console.error('Hash get error:', error)
      return null
    }
  }

  // Returns every field of the hash with its value parsed from JSON.
  async hGetAll(key) {
    try {
      const data = await redis.hGetAll(key)
      const parsed = {}
      for (const [field, value] of Object.entries(data)) {
        parsed[field] = JSON.parse(value)
      }
      return parsed
    } catch (error) {
      console.error('Hash get all error:', error)
      return {}
    }
  }
}

app.get('/api/posts/:id/comments', async (req, res) => {
  const { id } = req.params

  const comments = await cacheService.hGet(`post:${id}`, 'comments')
  if (comments) {
    return res.json(comments)
  }

  const fresh = await db.comments.findByPostId(id)
  await cacheService.hSet(`post:${id}`, 'comments', fresh, 1800)

  res.json(fresh)
})

Cache Statistics

Track cache performance:

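// Extend CacheService with hit and miss counters. The counters live in process
// memory, so they reset on restart and are tracked separately per instance.
class CacheService {
  constructor() {
    this.stats = {
      hits: 0,
      misses: 0
    }
  }

  async get(key) {
    try {
      const data = await redis.get(key)
      if (data) {
        this.stats.hits++
        return JSON.parse(data)
      }

      this.stats.misses++
      return null
    } catch (error) {
      // Redis errors are logged but not counted as hits or misses.
      console.error('Cache get error:', error)
      return null
    }
  }

  // Returns the hit rate as a percentage string with two decimals.
  getHitRate() {
    const total = this.stats.hits + this.stats.misses
    return total > 0 ? ((this.stats.hits / total) * 100).toFixed(2) : '0.00'
  }
}

app.get('/api/cache/stats', (req, res) => {
  res.json({
    hits: cacheService.stats.hits,
    misses: cacheService.stats.misses,
    hitRate: `${cacheService.getHitRate()}%`
  })
})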
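
In-process counters only see traffic from one Node.js instance. Redis itself reports server-wide `keyspace_hits` and `keyspace_misses` in the output of its `INFO stats` command, so a complementary approach is to parse that text. The sketch below is a plain parser; the function name `parseKeyspaceHitRate` is hypothetical, and fetching the text (for example with the client's `info('stats')` call) is left as a commented assumption.

```javascript
// Parses the text returned by the Redis `INFO stats` command and computes the
// server-wide hit rate from `keyspace_hits` and `keyspace_misses`.
function parseKeyspaceHitRate(infoText) {
  const fields = {}

  for (const line of infoText.split(/\r?\n/)) {
    // Skip blank lines and section headers such as "# Stats".
    if (!line || line.startsWith('#')) continue
    const index = line.indexOf(':')
    if (index === -1) continue
    fields[line.slice(0, index)] = line.slice(index + 1)
  }

  const hits = Number(fields.keyspace_hits || 0)
  const misses = Number(fields.keyspace_misses || 0)
  const total = hits + misses

  return { hits, misses, hitRate: total > 0 ? (hits / total) * 100 : 0 }
}

// Usage (assumes a connected node-redis client):
// const stats = parseKeyspaceHitRate(await client.info('stats'))
```

These counters cover every key lookup on the server, not only cache reads made by this application.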

Best Practice Note

This is the same Redis caching architecture we use in CoreUI enterprise applications. The cache-aside pattern, combined with invalidation on every write, keeps cached data consistent with the database while maintaining high cache hit rates. Always set TTL values based on data volatility, and monitor cache statistics to tune performance.
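
To make "TTL based on data volatility" concrete, here is a small sketch that maps volatility levels to TTLs and adds random jitter so entries cached at the same time do not all expire together. The category names, the TTL values, and the function name `ttlFor` are assumptions for illustration, not recommendations for any particular workload.

```javascript
// Hypothetical TTLs in seconds per volatility level; tune for real workloads.
const TTL_BY_VOLATILITY = {
  high: 60,
  medium: 300,
  low: 3600
}

// Returns a TTL with random jitter of up to +/- jitterRatio of the base value.
function ttlFor(volatility, jitterRatio = 0.1, random = Math.random) {
  const base = TTL_BY_VOLATILITY[volatility]
  if (base === undefined) {
    throw new Error(`Unknown volatility: ${volatility}`)
  }

  // random() * 2 - 1 maps the [0, 1) range onto [-1, 1).
  const jitter = Math.round(base * jitterRatio * (random() * 2 - 1))
  return Math.max(1, base + jitter)
}

// Usage with the service from this article:
// await cacheService.set(`user:${id}`, user, ttlFor('medium'))
```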

Related Articles

For basic caching patterns, you might also want to learn how to implement caching in Node.js for in-memory caching strategies.