How to expose gRPC in Node.js

Exposing gRPC services in Node.js enables building high-performance microservices that communicate using protocol buffers.

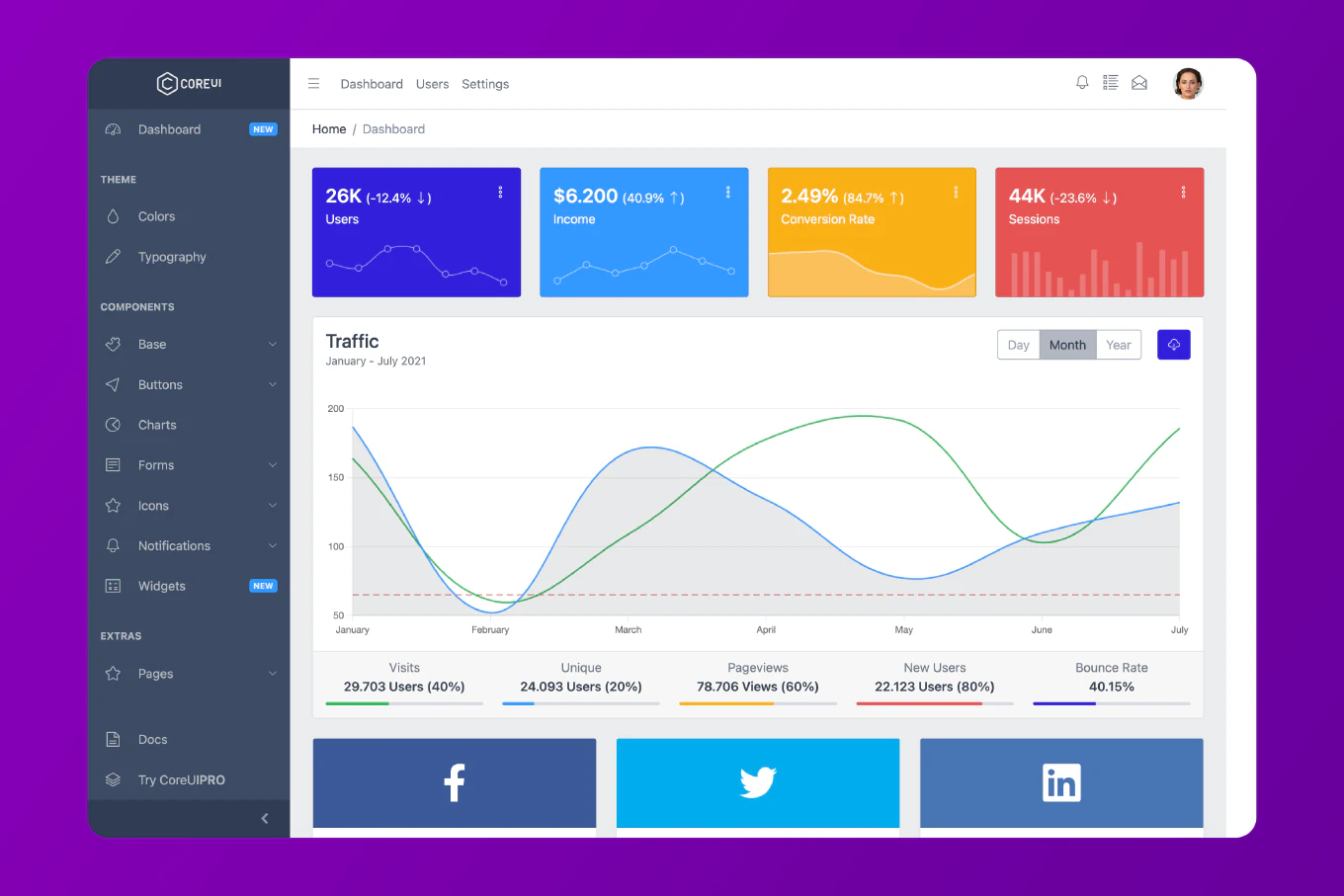

As the creator of CoreUI with over 10 years of Node.js experience since 2014, I’ve built gRPC servers for real-time data processing, inter-service communication, and high-throughput APIs.

The standard approach uses @grpc/grpc-js to create a server, define service implementations, and bind them to network ports.

This provides type-safe, efficient service endpoints that outperform traditional REST APIs for many use cases.

Install @grpc/grpc-js and @grpc/proto-loader to create gRPC servers.

npm install @grpc/grpc-js @grpc/proto-loader

These packages provide the core gRPC server functionality. @grpc/grpc-js is the official pure JavaScript implementation of gRPC for Node.js. @grpc/proto-loader loads .proto files and generates service definitions at runtime.

Defining a Proto File

Create a service contract in a .proto file.

// user.proto
syntax = "proto3";

package user;

service UserService {
  rpc GetUser (UserRequest) returns (UserResponse);
  rpc CreateUser (CreateUserRequest) returns (UserResponse);
  rpc ListUsers (Empty) returns (stream UserResponse);
}

message UserRequest {
  string id = 1;
}

message CreateUserRequest {
  string name = 1;
  string email = 2;
}

message UserResponse {
  string id = 1;
  string name = 2;
  string email = 3;
}

message Empty {}

This proto file defines a UserService with three RPC methods. GetUser and CreateUser are unary RPCs (single request, single response). ListUsers is a server streaming RPC (single request, multiple responses). Protocol buffers give both sides a strongly typed contract.
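To make the unary/streaming distinction concrete, the sketch below classifies each method from two boolean flags. The methods object is a hand-written stand-in, not something loaded from the .proto file; it assumes the requestStream and responseStream fields that @grpc/grpc-js exposes on each entry of a loaded service definition.

```javascript
// Hand-written stand-in for the method entries of UserService.
// Assumption: real entries come from the loaded service definition
// and carry the same two boolean flags.
const methods = {
  GetUser: { requestStream: false, responseStream: false },
  CreateUser: { requestStream: false, responseStream: false },
  ListUsers: { requestStream: false, responseStream: true }
}

// Map the two flags to the four RPC kinds gRPC supports.
function rpcKind({ requestStream, responseStream }) {
  if (requestStream && responseStream) return 'bidirectional streaming'
  if (requestStream) return 'client streaming'
  if (responseStream) return 'server streaming'
  return 'unary'
}

for (const [name, method] of Object.entries(methods)) {
  console.log(`${name}: ${rpcKind(method)}`)
}
```

The kind of RPC also determines the handler signature used later in this article: handlers for unary and client streaming methods receive a callback, while server streaming handlers receive only the call object.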

Creating a gRPC Server

Build a server that implements the service methods.

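const grpc = require('@grpc/grpc-js')
const protoLoader = require('@grpc/proto-loader')

const PROTO_PATH = './user.proto'

// Load the .proto file at runtime
const packageDefinition = protoLoader.loadSync(PROTO_PATH, {
  keepCase: true, // keep field names as written in the .proto file
  longs: String,  // represent 64-bit integers as strings
  enums: String,  // represent enum values by name
  defaults: true, // populate unset fields with their default values
  oneofs: true    // add virtual oneof properties
})

// "user" is the package name declared in user.proto
const userProto = grpc.loadPackageDefinition(packageDefinition).user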

// In-memory sample data (placeholder addresses)
const users = [
  { id: '1', name: 'John Doe', email: 'john.doe@example.com' },
  { id: '2', name: 'Jane Smith', email: 'jane.smith@example.com' }
]

function getUser(call, callback) {
  const user = users.find(u => u.id === call.request.id)
  if (user) {
    callback(null, user)
  } else {
    callback({
      code: grpc.status.NOT_FOUND,
      details: 'User not found'
    })
  }
}

function createUser(call, callback) {
  const newUser = {
    id: String(users.length + 1),
    name: call.request.name,
    email: call.request.email
  }
  users.push(newUser)
  callback(null, newUser)
}

function listUsers(call) {
  users.forEach(user => {
    call.write(user)
  })
  call.end()
}

const server = new grpc.Server()

server.addService(userProto.UserService.service, {
  getUser,
  createUser,
  listUsers
})

server.bindAsync(
  '0.0.0.0:50051',
  grpc.ServerCredentials.createInsecure(),
  (error, port) => {
    if (error) {
      console.error('Failed to bind server:', error)
      return
    }
    console.log(`Server running on port ${port}`)
  }
)

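The getUser function handles unary requests and replies through the callback. The createUser function appends to the in-memory array and returns the new record. The listUsers function streams one message per user with call.write() and closes the stream with call.end(). The server.addService() call registers the implementation, and bindAsync() binds the server to port 50051. In @grpc/grpc-js releases before 1.10, the server also has to be started by calling server.start() inside the bindAsync callback; newer releases begin serving once the port is bound.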

Implementing Unary RPC Methods

Handle single request/response RPCs with validation.

function getUser(call, callback) {
  const { id } = call.request

  if (!id) {
    return callback({
      code: grpc.status.INVALID_ARGUMENT,
      details: 'User ID is required'
    })
  }

  const user = users.find(u => u.id === id)

  if (!user) {
    return callback({
      code: grpc.status.NOT_FOUND,
      details: `User with ID ${id} not found`
    })
  }

  callback(null, user)
}

The function extracts the request data from call.request. Validation checks ensure required fields are present. Errors are returned with appropriate gRPC status codes. Success responses pass null as the first argument and data as the second. This follows Node.js error-first callback conventions.
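The same validate-then-respond pattern applies to CreateUser. The sketch below is one possible shape, not part of the original server: validateCreateUser is a hypothetical helper, the email check is deliberately loose, and INVALID_ARGUMENT is written as its numeric value 3 (the value of grpc.status.INVALID_ARGUMENT) so the snippet runs without the gRPC package.

```javascript
// Numeric value of grpc.status.INVALID_ARGUMENT, inlined so the sketch
// has no dependencies. In the server, use grpc.status.INVALID_ARGUMENT.
const INVALID_ARGUMENT = 3

// Hypothetical helper: returns a gRPC status object describing the first
// problem found, or null when the request is acceptable.
function validateCreateUser(request) {
  const name = (request.name || '').trim()
  const email = (request.email || '').trim()
  if (!name) {
    return { code: INVALID_ARGUMENT, details: 'Name is required' }
  }
  // Loose check: something before and after a single "@".
  if (!/^[^@\s]+@[^@\s]+$/.test(email)) {
    return { code: INVALID_ARGUMENT, details: 'A valid email is required' }
  }
  return null
}

const users = []

function createUser(call, callback) {
  const problem = validateCreateUser(call.request)
  if (problem) return callback(problem)

  const newUser = {
    id: String(users.length + 1),
    name: call.request.name,
    email: call.request.email
  }
  users.push(newUser)
  callback(null, newUser)
}

// Exercise the handler with plain objects in place of a real gRPC call.
createUser({ request: { name: '', email: 'a@example.com' } }, (err) => {
  console.log(err.details) // Name is required
})
createUser({ request: { name: 'Ann', email: 'ann@example.com' } }, (err, user) => {
  console.log(user.id) // 1
})
```

Keeping validation in a separate function means it can be tested on its own and reused by other handlers that accept the same message.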

Implementing Server Streaming

Stream multiple responses to a single request.

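// Assumes the ListUsers request message declares int32 limit and offset fields.
// The Empty message defined earlier has neither, so the fallbacks below always apply.
function listUsers(call) {
  // With defaults: true, unset numeric fields arrive as 0, so fall back with ||
  const limit = call.request.limit || 10
  const offset = call.request.offset || 0
  const paginatedUsers = users.slice(offset, offset + limit)

  // Nothing to send: close the stream right away so the client is not left waiting
  if (paginatedUsers.length === 0) {
    return call.end()
  }

  paginatedUsers.forEach((user, index) => {
    setTimeout(() => {
      call.write(user)
      if (index === paginatedUsers.length - 1) {
        call.end()
      }
    }, index * 100)
  })
}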

The call.write() method sends each user as a separate message, and call.end() signals that the stream is complete. The timeout simulates asynchronous processing; in production, this might involve database queries or API calls. Server streaming suits large result sets because the client can start processing messages as they arrive instead of waiting for one large response.
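For larger result sets, the return value of call.write() matters: as with other Node.js writable streams, false signals that the internal buffer is full and that writing should pause until the 'drain' event fires. The helper below is a sketch of that pattern. streamItems is a hypothetical name, and it relies only on the write(), once() and end() methods of the call object.

```javascript
// Hypothetical helper: writes items to a server-streaming call, pausing
// whenever write() reports a full buffer and resuming on 'drain'.
function streamItems(call, items) {
  let index = 0

  function pump() {
    while (index < items.length) {
      const canContinue = call.write(items[index++])
      if (!canContinue && index < items.length) {
        // Buffer is full: wait for it to empty before sending more.
        call.once('drain', pump)
        return
      }
    }
    // Every item has been handed to the stream.
    call.end()
  }

  pump()
}

// Usage inside the service implementation:
// function listUsers(call) {
//   streamItems(call, users)
// }
```

For a handful of records the plain forEach loop is fine; the extra bookkeeping only pays off when the server can produce messages faster than the client consumes them.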

Implementing Client Streaming

Accept multiple requests and return a single response.

// Add to proto file
// rpc BatchCreateUsers (stream CreateUserRequest) returns (BatchCreateResponse);
// BatchCreateResponse also needs a definition that matches the response
// built below: a count field and a repeated list of users.

function batchCreateUsers(call, callback) {
  const createdUsers = []

  // Fires once per message sent by the client
  call.on('data', (request) => {
    const newUser = {
      id: String(users.length + createdUsers.length + 1),
      name: request.name,
      email: request.email
    }
    createdUsers.push(newUser)
  })

  // Fires when the client has finished sending
  call.on('end', () => {
    users.push(...createdUsers)
    callback(null, {
      count: createdUsers.length,
      users: createdUsers
    })
  })

  call.on('error', (error) => {
    console.error('Stream error:', error)
  })
}

The data event fires for each incoming request. The end event fires when the client finishes sending. The callback returns a summary of created users. This pattern is efficient for bulk operations.
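The order of events can be traced without a network by driving the handler with a stand-in for the call object. In the sketch below, fakeClientStream is a hypothetical test double that implements only on() and emit(), and the handler is a condensed copy of the one in this section.

```javascript
const users = []

// Condensed copy of the client-streaming handler from this section.
function batchCreateUsers(call, callback) {
  const createdUsers = []
  call.on('data', (request) => {
    createdUsers.push({
      id: String(users.length + createdUsers.length + 1),
      name: request.name,
      email: request.email
    })
  })
  call.on('end', () => {
    users.push(...createdUsers)
    callback(null, { count: createdUsers.length, users: createdUsers })
  })
}

// Hypothetical test double: just enough of an event emitter for the handler.
function fakeClientStream() {
  const listeners = {}
  return {
    on(event, listener) { listeners[event] = listener },
    emit(event, payload) { if (listeners[event]) listeners[event](payload) }
  }
}

const call = fakeClientStream()
let summary = null
batchCreateUsers(call, (error, response) => { summary = response })

call.emit('data', { name: 'Ann', email: 'ann@example.com' })
call.emit('data', { name: 'Bob', email: 'bob@example.com' })
console.log(summary) // null: nothing is returned until the client ends the stream
call.emit('end')
console.log(summary.count) // 2
```

Because the shared users array is only updated in the end handler, a stream that fails halfway leaves the existing data untouched.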

Adding Middleware and Error Handling

Implement global error handling and logging.

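function wrapHandler(handler) {
  return async (call, callback) => {
    try {
      console.log(`[${new Date().toISOString()}] ${handler.name} called`)

      if (handler.length === 1) {
        // Server-streaming handler: receives only the call object
        return await handler(call)
      }

      // Unary or client-streaming handler: receives the call and a callback
      await handler(call, callback)
    } catch (error) {
      console.error(`Error in ${handler.name}:`, error)
      const status = {
        code: grpc.status.INTERNAL,
        details: 'Internal server error'
      }
      if (typeof callback === 'function') {
        callback(status)
      } else {
        // Server-streaming calls have no callback; emitting 'error' ends the stream with this status
        call.emit('error', status)
      }
    }
  }
}

// Use this in place of the earlier addService() call; registering the same service twice fails
server.addService(userProto.UserService.service, {
  getUser: wrapHandler(getUser),
  createUser: wrapHandler(createUser),
  listUsers: wrapHandler(listUsers)
})

The wrapper function logs every request and catches errors thrown by the handler, including rejected promises from async handlers. It uses function arity to tell handlers that take only the call (server streaming) from those that also take a callback (unary and client streaming), and it reports failures through the callback when there is one or by emitting 'error' on the call otherwise. This provides centralized error handling and basic observability.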
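The wrapper reports every failure as INTERNAL. One possible refinement, sketched below, is to translate application errors into more specific status codes before replying. NotFoundError, ValidationError and toStatus are hypothetical names; the numeric codes are the standard gRPC values that are also available as grpc.status.INVALID_ARGUMENT, grpc.status.NOT_FOUND and grpc.status.INTERNAL.

```javascript
// Standard gRPC status code values (same numbers as grpc.status.*).
const status = { INVALID_ARGUMENT: 3, NOT_FOUND: 5, INTERNAL: 13 }

// Hypothetical application error types.
class NotFoundError extends Error {}
class ValidationError extends Error {}

// Translate a thrown error into a gRPC status object. Unknown errors are
// reported as INTERNAL with a generic message so internals do not leak.
function toStatus(error) {
  if (error instanceof ValidationError) {
    return { code: status.INVALID_ARGUMENT, details: error.message }
  }
  if (error instanceof NotFoundError) {
    return { code: status.NOT_FOUND, details: error.message }
  }
  return { code: status.INTERNAL, details: 'Internal server error' }
}

console.log(toStatus(new NotFoundError('User 42 not found')))
// { code: 5, details: 'User 42 not found' }
console.log(toStatus(new TypeError('boom')))
// { code: 13, details: 'Internal server error' }
```

With a mapping like this, the catch block of the wrapper could pass toStatus(error) to the client instead of a fixed object, and handlers could simply throw.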

Using SSL/TLS for Production

Secure the gRPC server with SSL/TLS certificates.

const fs = require('fs')

const serverCert = fs.readFileSync('./certs/server-cert.pem')
const serverKey = fs.readFileSync('./certs/server-key.pem')
const caCert = fs.readFileSync('./certs/ca-cert.pem')

const serverCredentials = grpc.ServerCredentials.createSsl(
  caCert,
  [{
    private_key: serverKey,
    cert_chain: serverCert
  }],
  true
)

server.bindAsync(
  '0.0.0.0:50051',
  serverCredentials,
  (error, port) => {
    if (error) {
      console.error('Failed to bind server:', error)
      return
    }
    console.log(`Secure server running on port ${port}`)
  }
)

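The createSsl() method configures TLS from the certificate files. The CA certificate is used to verify client certificates. The server certificate and key authenticate the server to its clients. The final argument, true, makes the server require a valid client certificate (mutual TLS); pass false to encrypt traffic without authenticating clients. With all three in place, communication is both encrypted and authenticated.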

Best Practice Note

This is the same gRPC server pattern we use in CoreUI backend services for building scalable microservice architectures. Always use SSL/TLS in production - never deploy with createInsecure() credentials. Implement proper error handling with meaningful gRPC status codes to help clients handle failures. Add request validation before processing to prevent invalid data. For high-load scenarios, consider implementing connection pooling and load balancing across multiple server instances. gRPC provides significant performance benefits over REST for service-to-service communication, especially when combined with HTTP/2 multiplexing and protocol buffer efficiency.
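One way to enforce the rule against insecure credentials is a startup check that refuses to run in production without certificate paths. The sketch below rests on assumptions: NODE_ENV is the conventional variable, while TLS_CERT_PATH, TLS_KEY_PATH and TLS_CA_PATH are names made up for illustration. It only decides which mode to use; creating the actual credentials stays as shown in the TLS section.

```javascript
// Decide how the server should be secured, based on environment variables.
// TLS_CERT_PATH, TLS_KEY_PATH and TLS_CA_PATH are hypothetical names.
function resolveSecurityMode(env) {
  const paths = {
    cert: env.TLS_CERT_PATH,
    key: env.TLS_KEY_PATH,
    ca: env.TLS_CA_PATH
  }
  const hasAllPaths = Object.values(paths).every(Boolean)

  if (hasAllPaths) return { mode: 'tls', paths }

  if (env.NODE_ENV === 'production') {
    // Fail fast instead of silently falling back to plaintext.
    throw new Error('TLS certificate paths are required in production')
  }
  return { mode: 'insecure' }
}

console.log(resolveSecurityMode({ NODE_ENV: 'development' }).mode) // insecure
```

At startup, the server could call resolveSecurityMode(process.env) and build either createSsl() or createInsecure() credentials from the result, so a missing certificate stops a production deployment instead of downgrading it.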