How to implement caching in Node.js

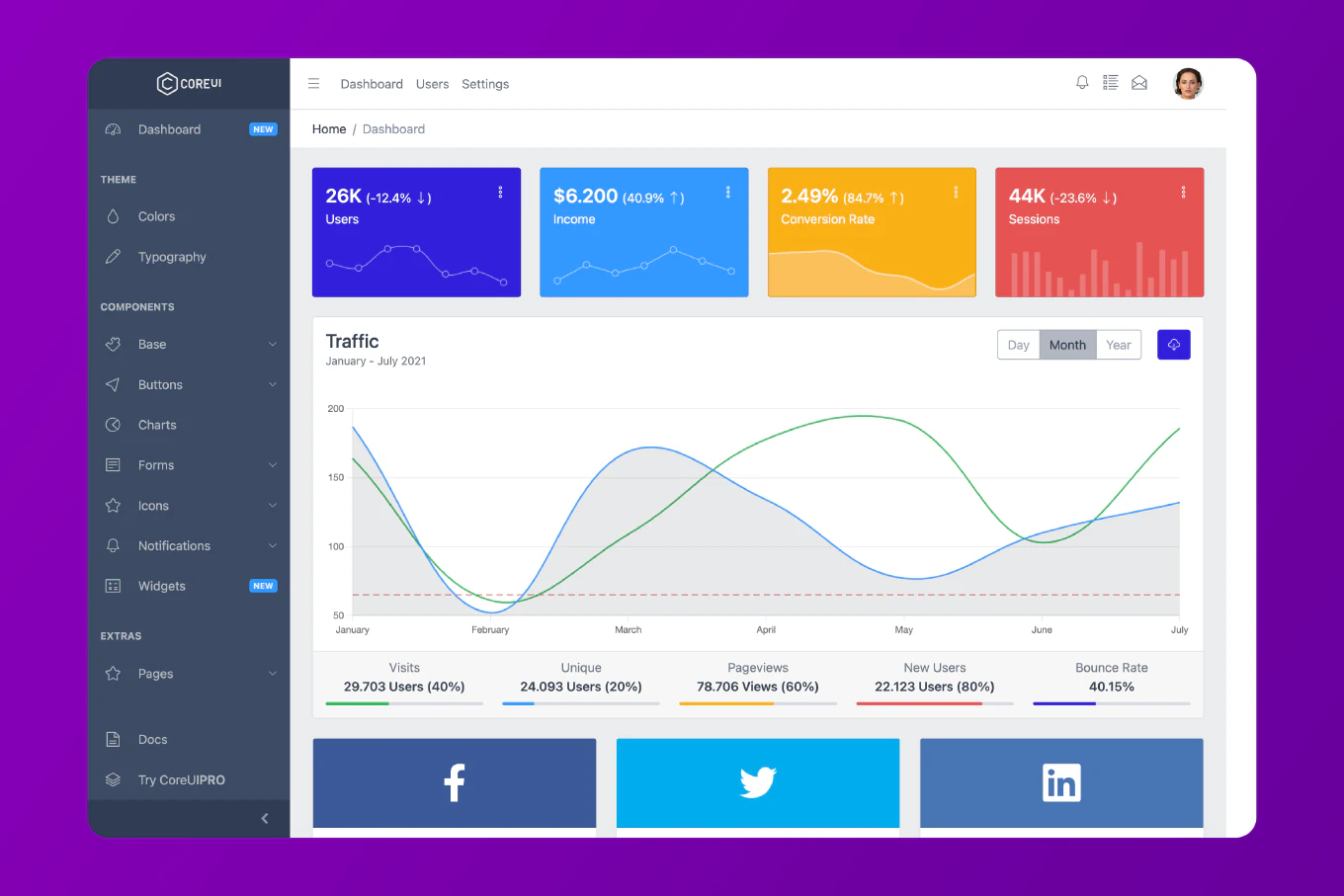

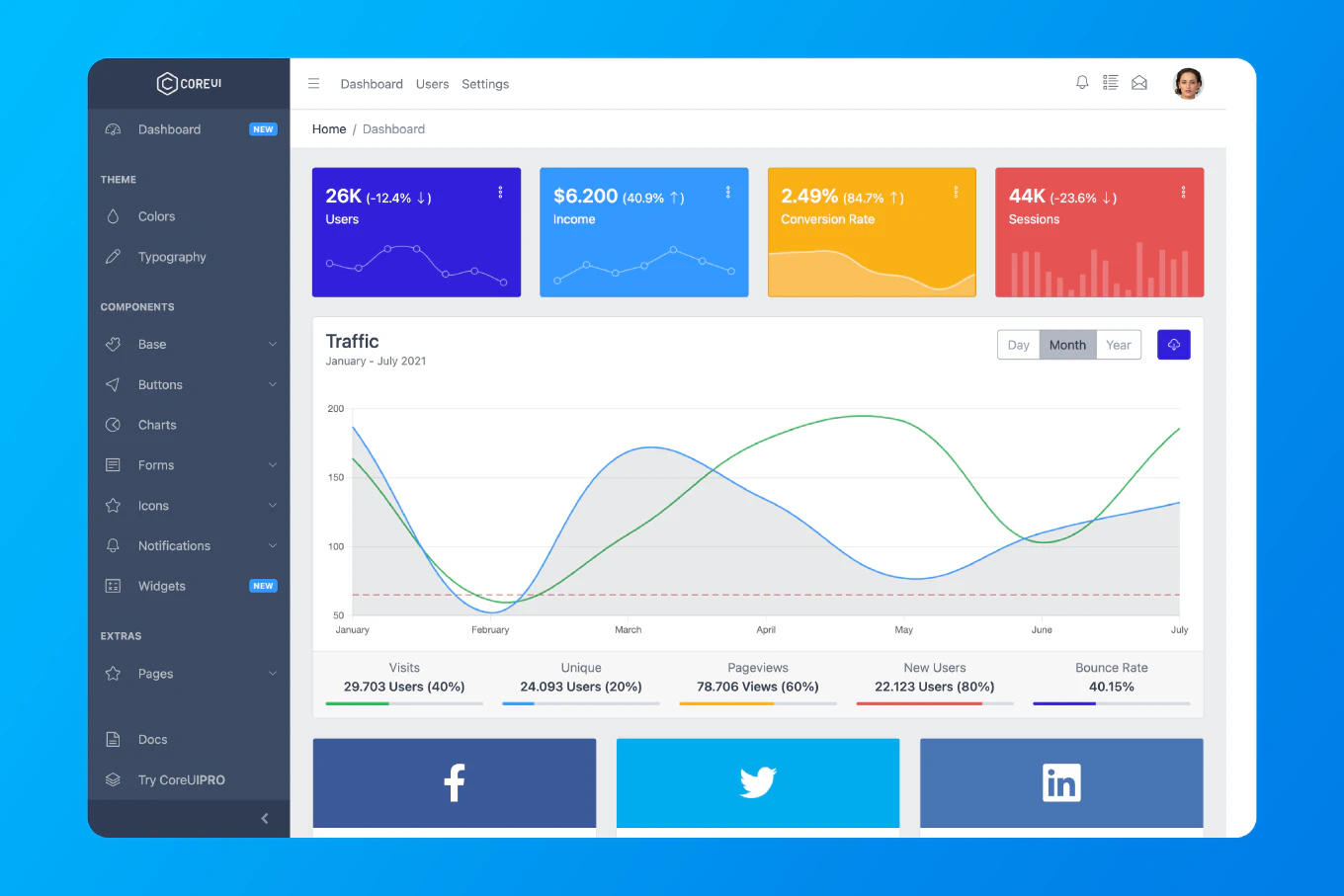

Caching can dramatically improve Node.js application performance by keeping frequently accessed data in memory, so repeated requests skip slow database queries or API calls. As the creator of CoreUI with 12 years of Node.js backend experience, I’ve implemented caching strategies that reduced API response times from seconds to milliseconds for millions of users.

The most effective approach combines in-memory caching for small datasets with Redis for distributed caching in production environments.

Simple Memory Cache

Create src/cache/memoryCache.js:

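    // Simple in-memory cache with a per-key TTL (time to live, in seconds)
    class MemoryCache {
      constructor() {
        this.cache = new Map()  // key -> cached value
        this.timers = new Map() // key -> pending expiry timer
      }

      set(key, value, ttl = 3600) {
        this.cache.set(key, value)

        // Cancel the previous expiry timer when a key is overwritten
        if (this.timers.has(key)) {
          clearTimeout(this.timers.get(key))
        }

        const timer = setTimeout(() => {
          this.delete(key)
        }, ttl * 1000)

        // Pending expiry timers should not keep the process alive on shutdown
        timer.unref()

        this.timers.set(key, timer)
      }

      get(key) {
        return this.cache.get(key)
      }

      has(key) {
        return this.cache.has(key)
      }

      delete(key) {
        this.cache.delete(key)

        if (this.timers.has(key)) {
          clearTimeout(this.timers.get(key))
          this.timers.delete(key)
        }
      }

      clear() {
        this.cache.clear()
        this.timers.forEach(timer => clearTimeout(timer))
        this.timers.clear()
      }
    }

    // Export a single shared instance so every module uses the same cache
    module.exports = new MemoryCache()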
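
The class above allocates one timer per key. As an alternative, here is a minimal sketch of lazy expiration, where each entry stores its own expiry timestamp and stale entries are evicted when they are next read. The `LazyCache` name and the injectable `now` clock are assumptions made for illustration, not part of the original module.

```javascript
// Sketch: lazy expiration. No timers are created; an entry is checked
// against the clock when it is read and evicted if it has expired.
class LazyCache {
  // `now` is injectable so the clock can be controlled in tests (assumption)
  constructor(now = () => Date.now()) {
    this.entries = new Map() // key -> { value, expiresAt }
    this.now = now
  }

  set(key, value, ttl = 3600) {
    this.entries.set(key, { value, expiresAt: this.now() + ttl * 1000 })
  }

  // Returns the live entry for a key, evicting it first if it has expired
  liveEntry(key) {
    const entry = this.entries.get(key)
    if (entry && entry.expiresAt <= this.now()) {
      this.entries.delete(key)
      return undefined
    }
    return entry
  }

  get(key) {
    const entry = this.liveEntry(key)
    return entry ? entry.value : undefined
  }

  has(key) {
    return this.liveEntry(key) !== undefined
  }
}
```

The trade-off is that keys which are never read again stay in memory until something removes them, so this variant usually needs a periodic sweep or a size limit as well.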

Usage with Express

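    const express = require('express')
    const cache = require('./cache/memoryCache')
    // `db` stands for your data access layer; it is not defined in this snippet

    const app = express()

    app.get('/api/users/:id', async (req, res) => {
      const { id } = req.params
      const cacheKey = `user:${id}`

      // Cache hit: respond without touching the database
      if (cache.has(cacheKey)) {
        return res.json(cache.get(cacheKey))
      }

      // Cache miss: load from the database and cache the result for 5 minutes
      const user = await db.users.findById(id)
      cache.set(cacheKey, user, 300)

      res.json(user)
    })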

Cache Middleware

Create reusable caching middleware:

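    const cacheMiddleware = (duration = 300) => {
      return (req, res, next) => {
        const key = `cache:${req.originalUrl || req.url}`

        // Serve the cached body if this URL was requested recently
        if (cache.has(key)) {
          return res.json(cache.get(key))
        }

        // Wrap res.json so the response body is cached on its way out
        const originalJson = res.json.bind(res)
        res.json = (data) => {
          // Only cache successful responses so errors are not replayed
          if (res.statusCode >= 200 && res.statusCode < 300) {
            cache.set(key, data, duration)
          }
          return originalJson(data)
        }

        next()
      }
    }

    // Cache the posts list for 10 minutes
    app.get('/api/posts', cacheMiddleware(600), async (req, res) => {
      const posts = await db.posts.findAll()
      res.json(posts)
    })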
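
Because the middleware keys entries by the raw URL, `/api/posts?page=1&limit=10` and `/api/posts?limit=10&page=1` end up as two separate cache entries. One possible way to avoid that is to normalize the key first. The `buildCacheKey` helper below is a hypothetical sketch, not part of Express or of the original middleware.

```javascript
// Sketch: build a stable cache key from an HTTP method and URL.
// Query parameters are sorted so that parameter order does not
// create separate cache entries for the same resource.
function buildCacheKey(method, originalUrl) {
  // The base is only needed so that URL can parse a relative path
  const url = new URL(originalUrl, 'http://localhost')
  url.searchParams.sort()
  const query = url.searchParams.toString()
  return `cache:${method}:${url.pathname}${query ? `?${query}` : ''}`
}

console.log(buildCacheKey('GET', '/api/posts?page=1&limit=10'))
// cache:GET:/api/posts?limit=10&page=1
```

Inside the middleware this could replace the key line with `buildCacheKey(req.method, req.originalUrl)`. Including the method in the key also makes it easier to skip caching for anything other than `GET`.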

Redis Cache

Install Redis client:

    npm install redis

Create src/cache/redisCache.js:

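    const redis = require('redis')

    class RedisCache {
      constructor() {
        // node-redis v4+ expects host and port under the `socket` option
        this.client = redis.createClient({
          socket: {
            host: process.env.REDIS_HOST || 'localhost',
            port: Number(process.env.REDIS_PORT) || 6379
          }
        })

        this.client.on('error', (err) => {
          console.error('Redis error:', err)
        })

        // connect() returns a promise, so handle a failed connection explicitly
        this.client.connect().catch((err) => {
          console.error('Redis connection error:', err)
        })
      }

      // Store a JSON-serialized value that expires after `ttl` seconds
      async set(key, value, ttl = 3600) {
        try {
          await this.client.setEx(key, ttl, JSON.stringify(value))
        } catch (error) {
          console.error('Cache set error:', error)
        }
      }

      // Return the parsed value, or null on a miss or a Redis error
      async get(key) {
        try {
          const data = await this.client.get(key)
          return data ? JSON.parse(data) : null
        } catch (error) {
          console.error('Cache get error:', error)
          return null
        }
      }

      async delete(key) {
        try {
          await this.client.del(key)
        } catch (error) {
          console.error('Cache delete error:', error)
        }
      }

      // Removes every key in the current Redis database, not only cache keys
      async clear() {
        try {
          await this.client.flushDb()
        } catch (error) {
          console.error('Cache clear error:', error)
        }
      }

      // KEYS scans the whole keyspace and blocks Redis while it runs;
      // on large production datasets, prefer iterating with SCAN
      async invalidatePattern(pattern) {
        try {
          const keys = await this.client.keys(pattern)

          if (keys.length > 0) {
            await this.client.del(keys)
          }
        } catch (error) {
          console.error('Cache invalidation error:', error)
        }
      }
    }

    module.exports = new RedisCache()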
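
Because `RedisCache` serializes values with `JSON.stringify` and reads them back with `JSON.parse`, a cached value is not always identical to what was stored. The sketch below runs the same round trip without Redis to show two common differences. The `roundTrip` helper and the sample object are hypothetical.

```javascript
// Sketch: what a value looks like after the JSON round trip that
// RedisCache.set() and RedisCache.get() perform.
function roundTrip(value) {
  return JSON.parse(JSON.stringify(value))
}

const original = {
  id: 1,
  createdAt: new Date('2024-01-01T00:00:00.000Z'),
  nickname: undefined
}

const restored = roundTrip(original)

console.log(typeof restored.createdAt) // string, no longer a Date
console.log('nickname' in restored)    // false, undefined fields are dropped

// Revive known date fields explicitly after reading from the cache
restored.createdAt = new Date(restored.createdAt)
```

Code that reads from the Redis cache should therefore not assume it gets back the same object types that the database layer returns.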

Cache-Aside Pattern

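    const cache = require('./cache/redisCache')
    // `db` stands for your data access layer; it is not defined in this snippet

    // Read path: try the cache first, fall back to the database on a miss
    async function getUser(id) {
      const cacheKey = `user:${id}`

      let user = await cache.get(cacheKey)
      if (user) {
        return user
      }

      user = await db.users.findById(id)

      // Only cache records that exist, so missing users are looked up again
      if (user) {
        await cache.set(cacheKey, user, 3600)
      }

      return user
    }

    // Write path: update the database first, then drop the stale cache entries
    async function updateUser(id, updates) {
      const user = await db.users.update(id, updates)

      await cache.delete(`user:${id}`)
      // Also drop any cached entries whose keys start with `users:` (for example, user lists)
      await cache.invalidatePattern('users:*')

      return user
    }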
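
The read path in `getUser` repeats for every entity type, so it can be factored into a small helper. This is a minimal sketch, assuming a cache object with async `get(key)` and `set(key, value, ttl)` methods like the ones above. The `getOrSet` name and the `loader` callback are hypothetical.

```javascript
// Sketch: generic cache-aside helper. `cache` is any object with async
// get(key) and set(key, value, ttl); `loader` fetches the value on a miss.
async function getOrSet(cache, key, ttl, loader) {
  const cached = await cache.get(key)
  if (cached !== null && cached !== undefined) {
    return cached
  }

  const fresh = await loader()

  // Skip caching empty results so a missing record is retried next time
  if (fresh !== null && fresh !== undefined) {
    await cache.set(key, fresh, ttl)
  }

  return fresh
}
```

With this helper, `getUser` could shrink to something like `getOrSet(cache, 'user:' + id, 3600, () => db.users.findById(id))`. Unlike the `if (user)` check above, this sketch treats only `null` and `undefined` as a miss, so falsy values such as `0` or an empty string can be cached.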

Cache Warming

Pre-populate cache on startup:

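    async function warmCache() {
      console.log('Warming cache...')

      // Load the 100 most popular posts and cache each one for an hour
      const popularPosts = await db.posts.findPopular(100)

      for (const post of popularPosts) {
        await cache.set(`post:${post.id}`, post, 3600)
      }

      console.log('Cache warmed')
    }

    app.listen(3000, async () => {
      // A failed warm-up should not crash the server; the cache then fills on demand
      try {
        await warmCache()
      } catch (error) {
        console.error('Cache warming failed:', error)
      }

      console.log('Server running on port 3000')
    })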
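
`warmCache` writes one entry at a time, which can be slow when each write is a network round trip. One option is to write in parallel batches. The sketch below assumes a cache with an async `set(key, value, ttl)` method. The `warmInBatches` name, the `batchSize` option, and its default of 20 are hypothetical.

```javascript
// Sketch: warm the cache in parallel batches instead of one key at a time.
// `cache` needs an async set(key, value, ttl); each item needs an `id` field.
async function warmInBatches(cache, items, { batchSize = 20, ttl = 3600 } = {}) {
  let failed = 0

  for (let i = 0; i < items.length; i += batchSize) {
    const batch = items.slice(i, i + batchSize)

    // allSettled waits for every write, so one failure does not abort the batch
    const results = await Promise.allSettled(
      batch.map((item) => cache.set(`post:${item.id}`, item, ttl))
    )

    failed += results.filter((result) => result.status === 'rejected').length
  }

  return { total: items.length, failed }
}
```

Note that `RedisCache.set` above catches its own errors, so with that class `failed` would stay at zero. The count is only meaningful for a cache whose `set` rejects on failure.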

Best Practice Note

This is the same caching architecture we recommend for CoreUI enterprise applications. Use in-memory caching for single-server deployments and Redis for distributed systems. Always implement cache invalidation strategies to prevent stale data, and monitor cache hit rates to optimize TTL values.
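
To monitor hit rates as suggested above, one simple approach is to wrap the cache and count hits and misses on every read. This is a rough sketch, assuming a cache whose async `get(key)` returns `null` on a miss, as `RedisCache` does. The `withStats` wrapper and its `hitRate` method are hypothetical.

```javascript
// Sketch: wrap a cache to count hits and misses on reads.
// `cache` is any object with async get(key), set(key, value, ttl) and delete(key).
function withStats(cache) {
  const stats = { hits: 0, misses: 0 }

  return {
    async get(key) {
      const value = await cache.get(key)
      if (value === null || value === undefined) {
        stats.misses += 1
      } else {
        stats.hits += 1
      }
      return value
    },
    set: (key, value, ttl) => cache.set(key, value, ttl),
    delete: (key) => cache.delete(key),
    // Fraction of reads served from the cache, or 0 before any reads
    hitRate() {
      const total = stats.hits + stats.misses
      return total === 0 ? 0 : stats.hits / total
    }
  }
}
```

A consistently low hit rate for a key family suggests the TTL is too short or the data is rarely re-read, while a very high hit rate on data that changes often is a cue to check that invalidation is working.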

Related Articles

For more advanced Redis patterns in distributed setups, you might also want to learn how to cache responses with Redis in Node.js.