How to implement rate limiting in Node.js

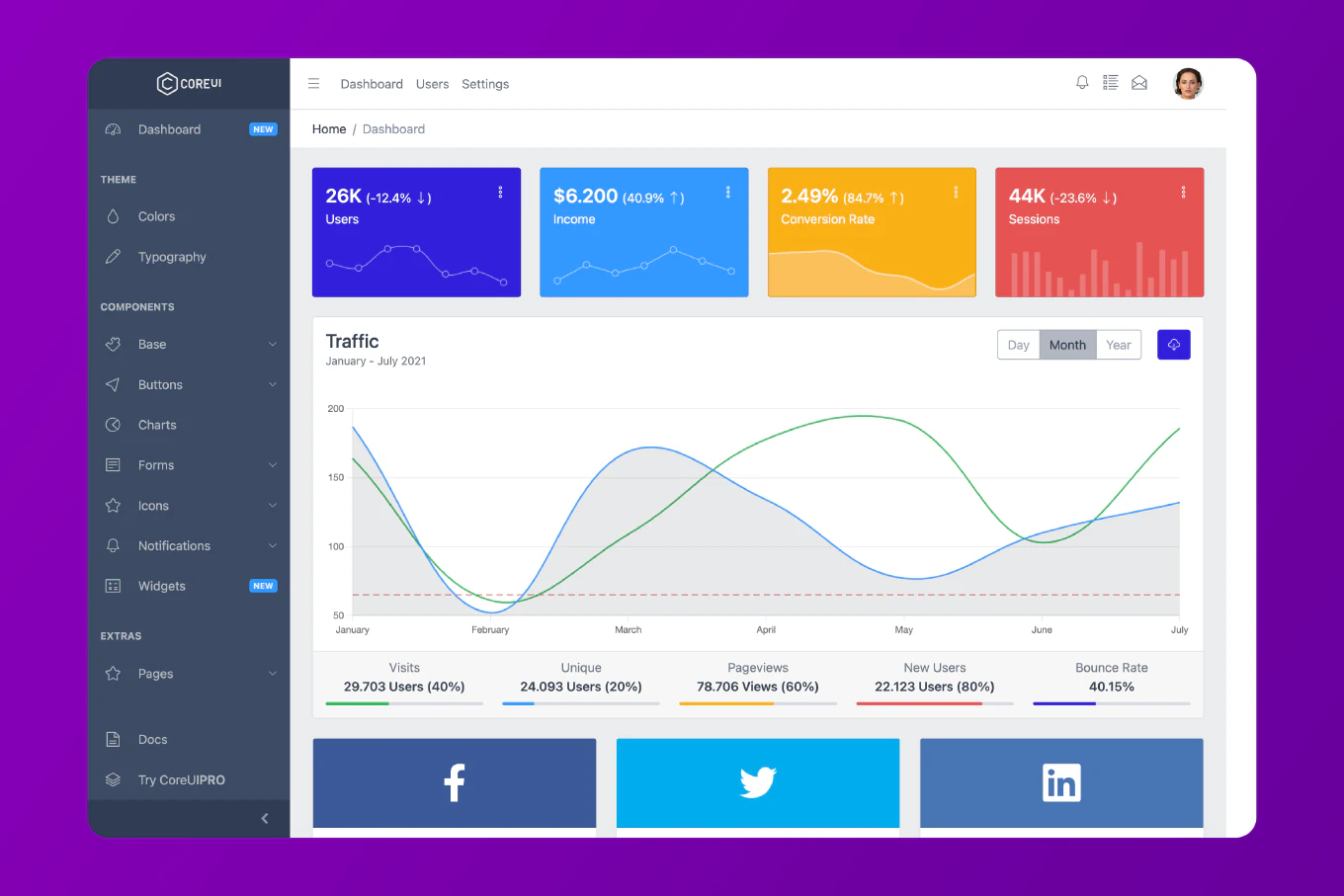

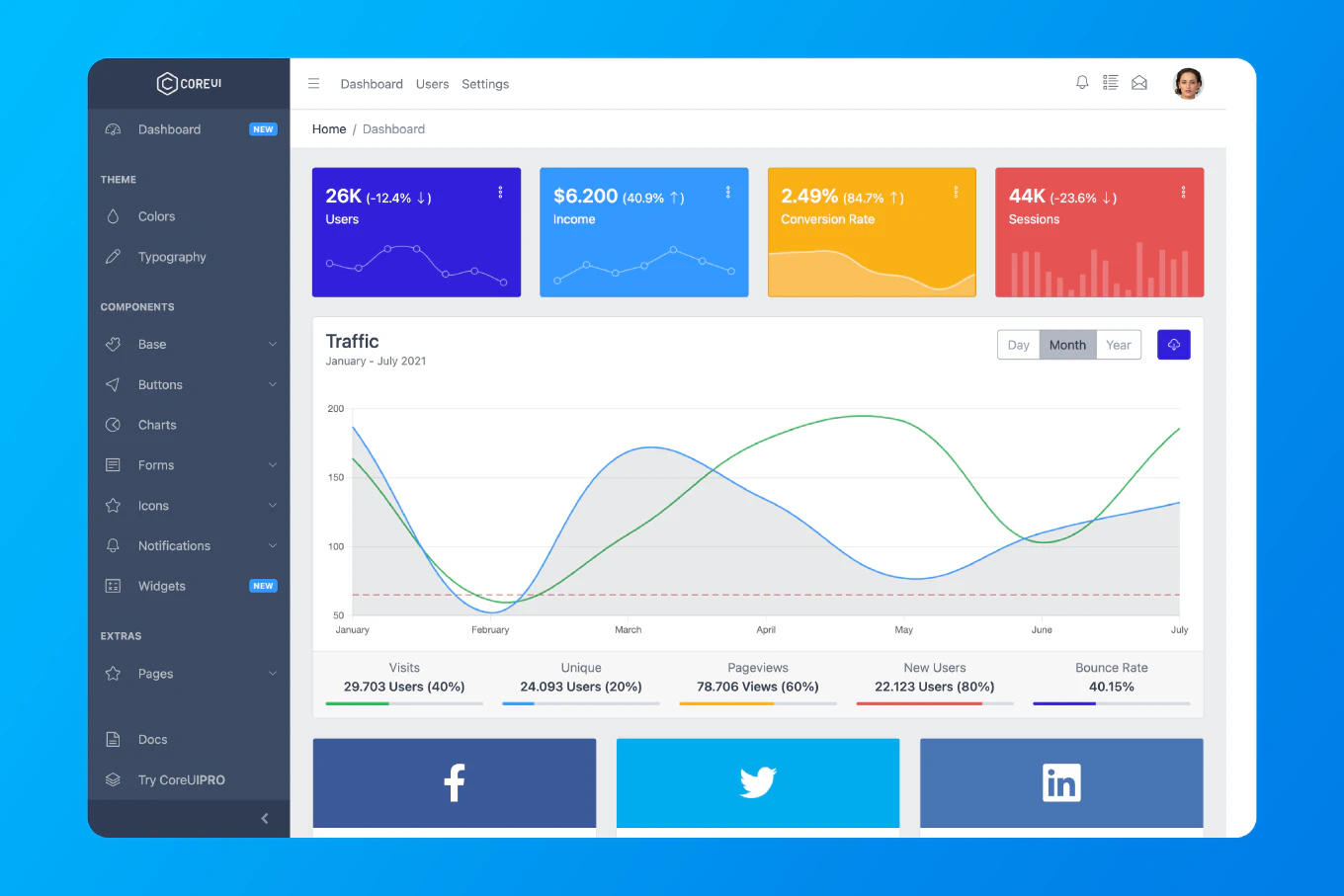

Rate limiting protects your Node.js API from abuse by restricting the number of requests a client can make in a time window. As the creator of CoreUI with 12 years of Node.js backend experience, I’ve implemented rate limiting strategies that protect production APIs serving millions of requests daily from DDoS attacks and resource exhaustion.

For APIs running on more than one server, the most effective approach is express-rate-limit backed by Redis, so that every instance shares the same counters.
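
Before reaching for a library, it can help to see the core idea of counting requests per client inside a time window. The following is only a minimal in-memory sketch of a fixed-window counter, not how express-rate-limit is implemented internally; `createFixedWindowCounter` and its return shape are hypothetical names chosen for illustration.

```javascript
// Minimal fixed-window counter (illustrative sketch only).
// `createFixedWindowCounter` is a hypothetical helper, not a library API.
function createFixedWindowCounter({ windowMs, max }) {
  const hits = new Map() // key -> { count, windowStart }

  return function hit(key, now = Date.now()) {
    let entry = hits.get(key)

    // Start a new window if none exists or the previous one has expired
    if (!entry || now - entry.windowStart >= windowMs) {
      entry = { count: 0, windowStart: now }
      hits.set(key, entry)
    }

    entry.count += 1

    return {
      allowed: entry.count <= max,
      remaining: Math.max(0, max - entry.count)
    }
  }
}

// Timestamps are passed explicitly so the behavior is easy to follow
const hit = createFixedWindowCounter({ windowMs: 60000, max: 2 })
console.log(hit('203.0.113.7', 0).allowed)     // true  (1st request)
console.log(hit('203.0.113.7', 1000).allowed)  // true  (2nd request)
console.log(hit('203.0.113.7', 2000).allowed)  // false (over the limit)
console.log(hit('203.0.113.7', 60000).allowed) // true  (new window)
```

The counters in this sketch live in the memory of a single process, which is why the Redis-backed setup matters once several servers handle the same clients.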

Install Dependencies

    npm install express-rate-limit rate-limit-redis redis

Basic Rate Limiting

Create src/middleware/rateLimiter.js:

    const rateLimit = require('express-rate-limit')

    const limiter = rateLimit({
      windowMs: 15 * 60 * 1000, // 15 minutes
      max: 100, // Limit each IP to 100 requests per windowMs
      message: 'Too many requests from this IP, please try again later',
      standardHeaders: true, // Return rate limit info in `RateLimit-*` headers
      legacyHeaders: false // Disable `X-RateLimit-*` headers
    })

    module.exports = limiter

Use with Express

    const express = require('express')
    const limiter = require('./middleware/rateLimiter')

    const app = express()

    // Apply to all requests
    app.use(limiter)

    app.get('/api/data', (req, res) => {
      res.json({ message: 'Success' })
    })

    app.listen(3000)

Route-Specific Rate Limits

    const express = require('express')
    const rateLimit = require('express-rate-limit')

    const app = express()

    // Strict limit for authentication endpoints
    const authLimiter = rateLimit({
      windowMs: 15 * 60 * 1000,
      max: 5,
      message: 'Too many login attempts, please try again after 15 minutes'
    })

    // Relaxed limit for general API
    const apiLimiter = rateLimit({
      windowMs: 15 * 60 * 1000,
      max: 100
    })

    // Apply different limits to different routes
    app.post('/api/login', authLimiter, (req, res) => {
      // Login logic
    })

    app.post('/api/register', authLimiter, (req, res) => {
      // Registration logic
    })

    app.get('/api/data', apiLimiter, (req, res) => {
      // Data endpoint
    })

Redis Store for Distributed Rate Limiting

Create src/middleware/distributedRateLimiter.js:

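    const rateLimit = require('express-rate-limit')
    // Named export in recent rate-limit-redis releases
    const { RedisStore } = require('rate-limit-redis')
    const redis = require('redis')

    // node-redis v4+ expects host and port under `socket`
    const redisClient = redis.createClient({
      socket: {
        host: process.env.REDIS_HOST || 'localhost',
        port: Number(process.env.REDIS_PORT) || 6379
      },
      password: process.env.REDIS_PASSWORD
    })

    redisClient.on('error', (err) => {
      console.error('Redis error:', err)
    })

    redisClient.connect().catch((err) => {
      console.error('Redis connection failed:', err)
    })

    const limiter = rateLimit({
      store: new RedisStore({
        // rate-limit-redis v3+ takes a sendCommand function instead of a client
        sendCommand: (...args) => redisClient.sendCommand(args),
        prefix: 'rate_limit:'
      }),
      windowMs: 15 * 60 * 1000,
      max: 100,
      standardHeaders: true,
      legacyHeaders: false
    })

    module.exports = limiter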

Custom Key Generator

Rate limit by user ID instead of IP:

    const userLimiter = rateLimit({
      windowMs: 15 * 60 * 1000,
      max: 100,
      keyGenerator: (req) => {
        // Use user ID from authentication token
        return req.user?.id || req.ip
      },
      skip: (req) => {
        // Skip rate limiting for admin users
        return req.user?.role === 'admin'
      }
    })
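
When user IDs and IP addresses share one key space, it can be useful to make the kind of key explicit so the two can never collide and stored keys are easier to read. The sketch below is one possible variation, assuming authentication middleware has already populated `req.user`; `rateLimitKey` and the `user:` / `ip:` prefixes are hypothetical choices, not part of express-rate-limit.

```javascript
// Hypothetical key builder: prefixes make it obvious which identity was used.
// Assumes `req.user` is set by earlier authentication middleware (if any).
function rateLimitKey(req) {
  if (req.user && req.user.id != null) {
    return `user:${req.user.id}`
  }
  // Fall back to the client IP for anonymous requests
  return `ip:${req.ip}`
}

console.log(rateLimitKey({ user: { id: 42 }, ip: '203.0.113.7' })) // user:42
console.log(rateLimitKey({ ip: '203.0.113.7' }))                   // ip:203.0.113.7
```

A function like this could be passed as the `keyGenerator` option in place of the inline arrow function.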

Custom Error Handler

    const limiter = rateLimit({
      windowMs: 15 * 60 * 1000,
      max: 100,
      handler: (req, res) => {
        res.status(429).json({
          error: 'Too Many Requests',
          message: 'You have exceeded the rate limit. Please try again later.',
          // Seconds until the current window resets (resetTime is a Date)
          retryAfter: Math.ceil((req.rateLimit.resetTime - Date.now()) / 1000)
        })
      }
    })
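
Clients usually need the retry hint as a number of seconds, which is also one of the forms the HTTP `Retry-After` header accepts. The following is a small sketch of that conversion, assuming `resetTime` is a `Date` as exposed on `req.rateLimit`; `retryAfterSeconds` is a hypothetical helper name.

```javascript
// Hypothetical helper: converts a reset Date into whole seconds from `now`.
// Returns undefined when the store does not report a reset time.
function retryAfterSeconds(resetTime, now = Date.now()) {
  if (!(resetTime instanceof Date)) {
    return undefined
  }
  // Round up so clients never retry slightly too early; never go negative
  return Math.max(0, Math.ceil((resetTime.getTime() - now) / 1000))
}

console.log(retryAfterSeconds(new Date(61500), 60000)) // 2
console.log(retryAfterSeconds(new Date(59000), 60000)) // 0
```

Inside a custom handler, the result could be used for both the JSON body and a `Retry-After` response header.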

Tiered Rate Limiting

Different limits for different user tiers:

    const createTieredLimiter = () => {
      const limits = {
        free: { windowMs: 15 * 60 * 1000, max: 100 },
        pro: { windowMs: 15 * 60 * 1000, max: 1000 },
        enterprise: { windowMs: 15 * 60 * 1000, max: 10000 }
      }

      return rateLimit({
        // One fixed window for all tiers; only max varies per request
        windowMs: 15 * 60 * 1000,
        max: (req) => {
          // Fall back to the free tier when the tier is missing or unknown
          const tier = Object.hasOwn(limits, req.user?.tier) ? req.user.tier : 'free'
          return limits[tier].max
        },
        keyGenerator: (req) => {
          return req.user?.id || req.ip
        }
      })
    }

    const tieredLimiter = createTieredLimiter()

    app.use('/api', tieredLimiter)

Custom Rate Limiter Implementation

    class RateLimiter {
      constructor(redisClient) {
        this.client = redisClient
      }

      async isAllowed(key, limit, window) {
        const now = Date.now()
        const windowStart = now - window

        const multi = this.client.multi()

        // Remove old entries
        multi.zRemRangeByScore(key, 0, windowStart)
        // Count current entries
        multi.zCard(key)
        // Add current request; the random suffix keeps requests that arrive
        // in the same millisecond from overwriting each other
        multi.zAdd(key, { score: now, value: `${now}-${Math.random()}` })
        // Set expiry
        multi.expire(key, Math.ceil(window / 1000))

        const results = await multi.exec()
        const count = results[1]

        return {
          allowed: count < limit,
          current: count + 1,
          limit,
          remaining: Math.max(0, limit - count - 1),
          resetTime: now + window
        }
      }

      middleware(options = {}) {
        const {
          windowMs = 15 * 60 * 1000,
          max = 100,
          keyGenerator = (req) => req.ip
        } = options

        return async (req, res, next) => {
          const key = `rate_limit:${keyGenerator(req)}`

          try {
            const result = await this.isAllowed(key, max, windowMs)
            const secondsUntilReset = Math.ceil((result.resetTime - Date.now()) / 1000)

            res.set({
              'RateLimit-Limit': result.limit,
              'RateLimit-Remaining': result.remaining,
              // Seconds until reset, the form used by the IETF RateLimit header draft
              'RateLimit-Reset': secondsUntilReset
            })

            if (!result.allowed) {
              return res.status(429).json({
                error: 'Too Many Requests',
                retryAfter: secondsUntilReset
              })
            }

            next()
          } catch (error) {
            console.error('Rate limit error:', error)
            next() // Fail open
          }
        }
      }
    }

    module.exports = RateLimiter

Usage of Custom Rate Limiter

    const express = require('express')
    const redis = require('redis')
    const RateLimiter = require('./middleware/RateLimiter')

    const app = express()

    const redisClient = redis.createClient()

    // Top-level await is not available in CommonJS modules, so handle the promise
    redisClient.connect().catch((err) => {
      console.error('Redis connection failed:', err)
    })

    const rateLimiter = new RateLimiter(redisClient)

    app.use(rateLimiter.middleware({
      windowMs: 15 * 60 * 1000,
      max: 100,
      keyGenerator: (req) => req.user?.id || req.ip
    }))

    app.get('/api/data', (req, res) => {
      res.json({ message: 'Success' })
    })

Rate Limit by Endpoint

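    const endpointLimits = {
      '/api/search': { windowMs: 60 * 1000, max: 10 },
      '/api/upload': { windowMs: 60 * 60 * 1000, max: 5 },
      '/api/data': { windowMs: 15 * 60 * 1000, max: 100 }
    }

    const defaultLimits = { windowMs: 15 * 60 * 1000, max: 100 }

    const createLimiter = ({ windowMs, max }) => rateLimit({
      windowMs,
      max,
      keyGenerator: (req) => `${req.ip}:${req.baseUrl}${req.path}`
    })

    // Create each limiter once at startup. A limiter built inside the request
    // handler would start with an empty store on every request and never block.
    const limiters = Object.fromEntries(
      Object.entries(endpointLimits).map(([path, limits]) => [path, createLimiter(limits)])
    )
    const defaultLimiter = createLimiter(defaultLimits)

    const dynamicLimiter = (req, res, next) => {
      // req.path is relative to the mount point, so prepend req.baseUrl
      const limiter = limiters[req.baseUrl + req.path] || defaultLimiter
      limiter(req, res, next)
    }

    app.use('/api', dynamicLimiter)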

Rate Limit Headers

    app.use((req, res, next) => {
      // Log rate limit info once the response has been sent
      res.on('finish', () => {
        if (req.rateLimit) {
          console.log({
            ip: req.ip,
            path: req.path,
            limit: req.rateLimit.limit,
            current: req.rateLimit.current,
            remaining: req.rateLimit.remaining,
            resetTime: new Date(req.rateLimit.resetTime)
          })
        }
      })

      next()
    })

Sliding Window Rate Limiter

    class SlidingWindowLimiter {
      constructor(redisClient) {
        this.client = redisClient
      }

      async check(key, limit, windowMs) {
        const now = Date.now()
        const windowStart = now - windowMs

        const multi = this.client.multi()

        multi.zRemRangeByScore(key, 0, windowStart)
        multi.zAdd(key, { score: now, value: `${now}-${Math.random()}` })
        multi.zCard(key)
        multi.expire(key, Math.ceil(windowMs / 1000))

        const results = await multi.exec()
        const count = results[2]

        return {
          allowed: count <= limit,
          count,
          limit,
          remaining: Math.max(0, limit - count)
        }
      }
    }
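
The class above keeps one sorted-set entry per request and counts the entries newer than `now - windowMs`. To make that logic easier to follow and test without a Redis server, here is a rough in-memory sketch of the same steps. `InMemorySlidingWindow` is a hypothetical stand-in for illustration only, and the explicit `now` argument is an assumption added so the example is deterministic.

```javascript
// In-memory sliding window log (illustrative stand-in for the Redis sorted set)
class InMemorySlidingWindow {
  constructor() {
    this.log = new Map() // key -> array of request timestamps
  }

  check(key, limit, windowMs, now = Date.now()) {
    const windowStart = now - windowMs

    // Drop timestamps at or before the window start
    // (mirrors zRemRangeByScore(key, 0, windowStart), which is inclusive)
    const recent = (this.log.get(key) || []).filter((t) => t > windowStart)

    // Record the current request, then count (mirrors zAdd followed by zCard)
    recent.push(now)
    this.log.set(key, recent)

    const count = recent.length
    return {
      allowed: count <= limit,
      count,
      limit,
      remaining: Math.max(0, limit - count)
    }
  }
}

const windowLimiter = new InMemorySlidingWindow()
console.log(windowLimiter.check('client-1', 2, 1000, 0).allowed)    // true
console.log(windowLimiter.check('client-1', 2, 1000, 500).allowed)  // true
console.log(windowLimiter.check('client-1', 2, 1000, 900).allowed)  // false
console.log(windowLimiter.check('client-1', 2, 1000, 2000).allowed) // true
```

As in the Redis version, rejected requests are still recorded, so a client that keeps hammering the endpoint keeps its own window full.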

Best Practice Note

This is the same rate limiting architecture we use in CoreUI enterprise applications. Rate limiting protects your API from abuse while ensuring fair resource allocation across users. Always use Redis-backed rate limiting in production for consistency across multiple server instances, and implement tiered limits based on user subscription levels.
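
To illustrate why a shared store matters across multiple instances, the following simulation compares two servers that each keep their own in-memory counters with two servers that share one store. It is only a sketch: plain `Map` objects stand in for the per-process memory store and for Redis, and `createCounter` and `countAllowed` are hypothetical helpers.

```javascript
// Each "instance" is a function that counts requests in the store it was given
function createCounter(store, max) {
  return (key) => {
    const count = (store.get(key) || 0) + 1
    store.set(key, count)
    return count <= max
  }
}

// Send `requests` requests from one client, alternating between instances
// the way a round-robin load balancer would
function countAllowed(instances, requests) {
  let allowed = 0
  for (let i = 0; i < requests; i++) {
    const handle = instances[i % instances.length]
    if (handle('client-1')) {
      allowed += 1
    }
  }
  return allowed
}

const max = 3

// Separate stores: every instance enforces the limit on its own
const isolated = [createCounter(new Map(), max), createCounter(new Map(), max)]

// Shared store: all instances see the same counter (the role Redis plays)
const sharedStore = new Map()
const shared = [createCounter(sharedStore, max), createCounter(sharedStore, max)]

console.log(countAllowed(isolated, 10)) // 6
console.log(countAllowed(shared, 10))   // 3
```

With isolated counters the effective limit grows with the number of instances, while the shared store enforces the intended limit of 3.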

Related Articles

For complete API security, you might also want to learn how to implement authentication with JWT in Node.js and how to secure Express API.