How to use fetch in Node.js

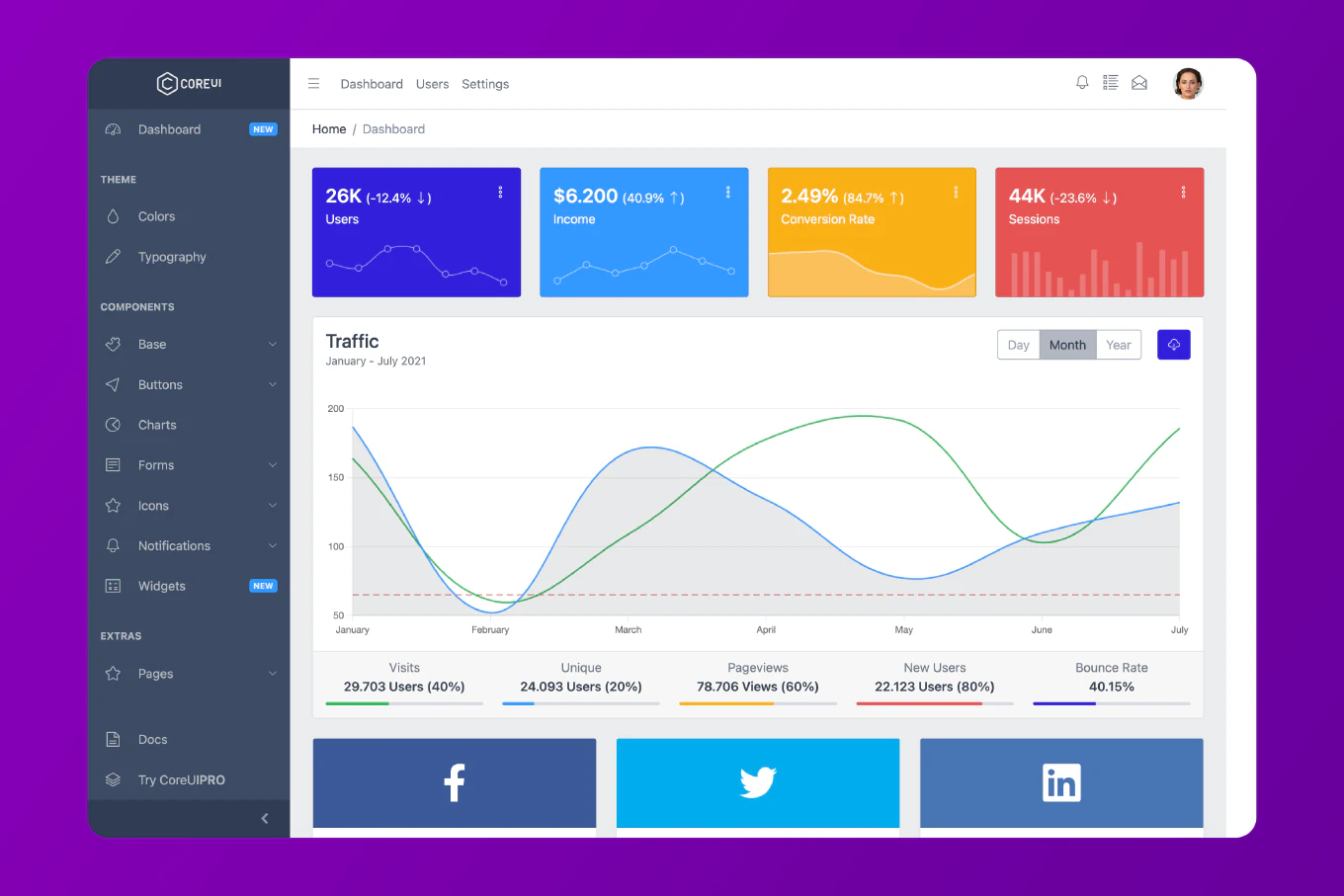

Making HTTP requests in Node.js has traditionally meant reaching for the low-level http module or a third-party library, but starting with Node.js 18 the fetch API is available as a global without any dependencies. I have been building Node.js applications since 2014, and as the creator of CoreUI, a widely used open-source UI library, I’ve implemented countless API integrations in production environments. For most requests, the simplest approach is the native fetch API, which brings the familiar browser interface to the server side. It removes an external dependency while providing a clean, promise-based interface for HTTP requests.

Use the native fetch API in Node.js 18+ for making HTTP requests without any external dependencies.

    const response = await fetch('https://api.example.com/data')
    const data = await response.json()
    console.log(data)

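This code makes a simple GET request to an API endpoint using the native fetch function. The first await resolves once the response headers arrive, and the second await reads the body and parses it as JSON. Because fetch returns a promise, you can use async/await syntax for clean, readable code. Note that top-level await, as used here, requires an ES module (an .mjs file or "type": "module" in package.json); in a CommonJS file, wrap the calls in an async function.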

Making GET Requests

    async function fetchData() {
      try {
        const response = await fetch('https://api.example.com/users')

        if (!response.ok) {
          throw new Error(`HTTP error! status: ${response.status}`)
        }

        const users = await response.json()
        console.log('Users:', users)
        return users
      } catch (error) {
        console.error('Fetch error:', error.message)
        throw error
      }
    }

    fetchData()

This example shows a complete GET request with error handling. fetch rejects only on network-level failures; an HTTP error such as 404 or 500 still resolves normally, which is why the code checks response.ok. That property is true when the status code is in the 200-299 range, and the example throws an error containing the status code otherwise. The try-catch block therefore handles both network errors and HTTP errors.
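Because fetch resolves even for 404 or 500 responses, it can help to keep the status check in one place. The sketch below is one possible shape, not a standard API: `HttpError` and `getJson` are hypothetical names introduced here for illustration.

```javascript
// Hypothetical error type that keeps the status code and response body together.
class HttpError extends Error {
  constructor(response, bodyText) {
    super(`HTTP error! status: ${response.status}`)
    this.name = 'HttpError'
    this.status = response.status
    this.bodyText = bodyText
  }
}

// Hypothetical helper: GET a URL and return parsed JSON, or throw HttpError.
async function getJson(url) {
  const response = await fetch(url)
  if (!response.ok) {
    // Read the body as text so the caller can inspect the server's error message.
    const bodyText = await response.text()
    throw new HttpError(response, bodyText)
  }
  return response.json()
}
```

A caller can then branch on `error.status`, for example treating a 404 as "not found" while rethrowing anything else.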

Making POST Requests

    async function createUser(userData) {
      const response = await fetch('https://api.example.com/users', {
        method: 'POST',
        headers: {
          'Content-Type': 'application/json'
        },
        body: JSON.stringify(userData)
      })

      if (!response.ok) {
        throw new Error(`HTTP error! status: ${response.status}`)
      }

      const newUser = await response.json()
      return newUser
    }

    const user = await createUser({
      name: 'John Doe',
      email: '[email protected]',
      age: 30
    })

    console.log('Created user:', user)

For POST requests, you need to specify the HTTP method, set appropriate headers, and include the request body. The Content-Type header tells the server that you are sending JSON data. fetch does not serialize plain objects for you, so you use JSON.stringify() to convert the JavaScript object to a string; the body option also accepts other types such as URLSearchParams, FormData, Blob, and ArrayBuffer. The server typically responds with the created resource, which you parse using response.json().
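JSON is not the only kind of body you can send. As a minimal sketch, assuming a hypothetical endpoint that expects form-encoded fields, a `URLSearchParams` instance can be passed directly as the body, and fetch then sets the `application/x-www-form-urlencoded` Content-Type itself. The name `submitForm` and the `/login` path are illustrative assumptions, not part of any real API.

```javascript
// Hypothetical helper: POST key/value pairs as application/x-www-form-urlencoded.
async function submitForm(url, fields) {
  const response = await fetch(url, {
    method: 'POST',
    // No Content-Type header is set here: fetch derives it from the body type.
    body: new URLSearchParams(fields)
  })

  if (!response.ok) {
    throw new Error(`HTTP error! status: ${response.status}`)
  }
  return response.text()
}

// Example call (not executed here, the endpoint is a placeholder):
// submitForm('https://api.example.com/login', { user: 'john', remember: 'true' })
```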

Sending Custom Headers

    async function fetchWithAuth() {
      const response = await fetch('https://api.example.com/protected', {
        method: 'GET',
        headers: {
          'Authorization': 'Bearer eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...',
          'Content-Type': 'application/json',
          'Accept': 'application/json',
          'User-Agent': 'NodeJS Fetch Client',
          'X-Custom-Header': 'custom-value'
        }
      })

      const data = await response.json()
      return data
    }

    const protectedData = await fetchWithAuth()

    console.log('Protected data:', protectedData)

Custom headers are essential for authentication, content negotiation, and API-specific requirements. The Authorization header typically contains a bearer token for API authentication. The Accept header tells the server what response format you prefer, while the User-Agent identifies your client application. You can add any custom headers your API requires using the headers object.
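Headers can also be built and inspected with the standard `Headers` class, which treats header names case-insensitively. The following is a small sketch; `buildAuthHeaders` and the token value are placeholders invented for illustration.

```javascript
// Hypothetical helper: build request headers for a bearer-token API.
function buildAuthHeaders(token) {
  const headers = new Headers()
  headers.set('Authorization', `Bearer ${token}`)
  headers.set('Accept', 'application/json')
  return headers
}

const headers = buildAuthHeaders('my-token')

// Lookups ignore case, so either spelling finds the same header.
console.log(headers.get('authorization')) // Bearer my-token
console.log(headers.has('ACCEPT')) // true

// A Headers instance can be passed straight to fetch (placeholder URL, not executed):
// fetch('https://api.example.com/protected', { headers })
```

The same class backs `response.headers`, so `response.headers.get('content-type')` works the same way on the receiving side.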

Handling Different Response Types

    async function handleResponses() {
      // JSON response
      const jsonResponse = await fetch('https://api.example.com/users')
      const jsonData = await jsonResponse.json()

      // Text response
      const textResponse = await fetch('https://api.example.com/text')
      const textData = await textResponse.text()

      // Blob response (for binary data)
      const imageResponse = await fetch('https://api.example.com/image')
      const imageBlob = await imageResponse.blob()

      // ArrayBuffer response
      const bufferResponse = await fetch('https://api.example.com/binary')
      const bufferData = await bufferResponse.arrayBuffer()

      console.log('JSON:', jsonData)
      console.log('Text:', textData)
      console.log('Image size:', imageBlob.size)
      console.log('Buffer length:', bufferData.byteLength)
    }

    handleResponses()

The fetch API provides multiple methods to parse response bodies based on the content type. Use json() for JSON data, text() for plain text or HTML, blob() for binary data like images or files, and arrayBuffer() for raw binary data. Each method returns a promise, so you need to await it before using the data.
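When the response format is not known in advance, one option is to pick the parsing method from the Content-Type header. This is only a sketch under that assumption; `parseBody` is a hypothetical helper, and real APIs may need more cases.

```javascript
// Hypothetical helper: choose a body parser from the Content-Type header.
async function parseBody(response) {
  const contentType = response.headers.get('content-type') || ''

  if (contentType.includes('application/json')) {
    return response.json()
  }
  if (contentType.startsWith('text/')) {
    return response.text()
  }
  // Fall back to raw bytes for anything else (images, archives, and so on).
  return response.arrayBuffer()
}
```

Keep in mind that a response body can be read only once, so call exactly one of these methods per response.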

Implementing Timeouts

    async function fetchWithTimeout(url, timeout = 5000) {
      const controller = new AbortController()
      const timeoutId = setTimeout(() => controller.abort(), timeout)

      try {
        const response = await fetch(url, {
          signal: controller.signal
        })

        if (!response.ok) {
          throw new Error(`HTTP error! status: ${response.status}`)
        }

        // The timer stays armed while the body is read, so a stalled body also times out
        return await response.json()
      } catch (error) {
        if (error.name === 'AbortError') {
          throw new Error('Request timeout')
        }
        throw error
      } finally {
        // Clear the timer whether the request succeeded or failed
        clearTimeout(timeoutId)
      }
    }

    const data = await fetchWithTimeout('https://api.example.com/data', 3000)
    console.log(data)

Native fetch has no timeout option, but you can implement one with AbortController. A timer calls the controller’s abort() method after the timeout period, which cancels the request. The signal is passed to fetch, and when it is aborted the pending promise rejects with an AbortError. Clear the timer once the request settles so it does not fire later or keep the process alive longer than necessary.
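A shorter alternative is the static `AbortSignal.timeout()` method, which is present in Node.js 18 and creates a signal that aborts by itself after the given delay. The sketch below assumes that method is available in your runtime; `fetchJsonWithTimeout` is a hypothetical name. Note that the rejection is then named `TimeoutError` rather than `AbortError`.

```javascript
// Hypothetical helper: fetch JSON with a deadline, using AbortSignal.timeout().
async function fetchJsonWithTimeout(url, ms = 5000) {
  try {
    // The signal aborts on its own after `ms` milliseconds; no manual timer needed.
    const response = await fetch(url, { signal: AbortSignal.timeout(ms) })

    if (!response.ok) {
      throw new Error(`HTTP error! status: ${response.status}`)
    }
    return await response.json()
  } catch (error) {
    // A signal created by AbortSignal.timeout() aborts with a TimeoutError.
    if (error.name === 'TimeoutError') {
      throw new Error(`Request timed out after ${ms}ms`)
    }
    throw error
  }
}
```

There is no timer to clear with this approach, which removes one source of mistakes, at the cost of not being able to cancel the request manually through the same signal.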

Making Multiple Requests

    async function fetchMultipleEndpoints() {
      try {
        const [users, posts, comments] = await Promise.all([
          fetch('https://api.example.com/users').then(r => r.json()),
          fetch('https://api.example.com/posts').then(r => r.json()),
          fetch('https://api.example.com/comments').then(r => r.json())
        ])

        return { users, posts, comments }
      } catch (error) {
        console.error('Error fetching data:', error)
        throw error
      }
    }

    const allData = await fetchMultipleEndpoints()

    console.log('Users:', allData.users.length)
    console.log('Posts:', allData.posts.length)
    console.log('Comments:', allData.comments.length)

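When you need to fetch data from multiple endpoints, use Promise.all() to make the requests in parallel. The total wait is then roughly that of the slowest request rather than the sum of all of them. Each fetch is chained with .then(r => r.json()) to parse the response immediately. Promise.all() rejects as soon as any request rejects, so the entire operation fails and the catch block handles the error. This example does not check response.ok, so an HTTP error response would surface as a JSON parsing error or as unexpected data rather than as a clear failure.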
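If one failing endpoint should not discard the results of the others, `Promise.allSettled()` is an alternative to `Promise.all()`. The following sketch, with the hypothetical helper name `fetchAllSettled`, also adds the response.ok check and reports each outcome separately.

```javascript
// Hypothetical helper: fetch several URLs and report each outcome separately.
async function fetchAllSettled(urls) {
  const results = await Promise.allSettled(
    urls.map(async (url) => {
      const response = await fetch(url)
      if (!response.ok) {
        throw new Error(`HTTP error! status: ${response.status}`)
      }
      return response.json()
    })
  )

  // Pair every URL with either its parsed data or its error message.
  return results.map((result, index) => ({
    url: urls[index],
    ok: result.status === 'fulfilled',
    data: result.status === 'fulfilled' ? result.value : null,
    error: result.status === 'rejected' ? result.reason.message : null
  }))
}
```

The caller can then render whatever succeeded and log or retry only the entries where `ok` is false.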

Handling Query Parameters

    function buildUrl(baseUrl, params) {
      const url = new URL(baseUrl)

      Object.keys(params).forEach(key => {
        if (params[key] !== undefined && params[key] !== null) {
          url.searchParams.append(key, params[key])
        }
      })

      return url.toString()
    }

    async function fetchWithParams() {
      const url = buildUrl('https://api.example.com/users', {
        page: 1,
        limit: 10,
        sort: 'name',
        filter: 'active'
      })

      const response = await fetch(url)
      const data = await response.json()
      return data
    }

    const users = await fetchWithParams()

    console.log('Fetched users:', users)

Query parameters are commonly used for pagination, filtering, and sorting. The URL object provides a clean way to build URLs with query parameters using the searchParams API. This helper function skips undefined and null values to avoid sending empty parameters. The searchParams API encodes keys and values for you, so special characters such as spaces and ampersands cannot break the query string.
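To make the encoding concrete, here is a small variant that also expands array values into repeated parameters. `buildUrlWithArrays` is a hypothetical name, and whether an API expects repeated keys for lists is an assumption to verify against its documentation.

```javascript
// Hypothetical variant of buildUrl that also expands array values.
function buildUrlWithArrays(baseUrl, params) {
  const url = new URL(baseUrl)

  for (const [key, value] of Object.entries(params)) {
    // Skip missing values, as the original helper does.
    if (value === undefined || value === null) continue

    const values = Array.isArray(value) ? value : [value]
    for (const item of values) {
      url.searchParams.append(key, String(item))
    }
  }

  return url.toString()
}

console.log(buildUrlWithArrays('https://api.example.com/users', {
  q: 'node fetch & co',
  tag: ['a', 'b'],
  page: 1,
  unused: undefined
}))
// https://api.example.com/users?q=node+fetch+%26+co&tag=a&tag=b&page=1
```

The space becomes `+` and the ampersand becomes `%26`, so the value cannot be mistaken for a parameter separator.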

Implementing Retry Logic

    async function fetchWithRetry(url, options = {}, maxRetries = 3) {
      for (let i = 0; i < maxRetries; i++) {
        try {
          const response = await fetch(url, options)

          if (!response.ok && response.status >= 500) {
            throw new Error(`Server error: ${response.status}`)
          }

          return response
        } catch (error) {
          const isLastAttempt = i === maxRetries - 1

          if (isLastAttempt) {
            throw error
          }

          const delay = Math.min(1000 * Math.pow(2, i), 10000)
          console.log(`Retry attempt ${i + 1} after ${delay}ms`)
          await new Promise(resolve => setTimeout(resolve, delay))
        }
      }
    }

    async function fetchDataWithRetry() {
      try {
        const response = await fetchWithRetry('https://api.example.com/data')
        const data = await response.json()
        return data
      } catch (error) {
        console.error('Failed after retries:', error.message)
        throw error
      }
    }

    const data = await fetchDataWithRetry()

    console.log('Data:', data)

Retry logic improves reliability when dealing with flaky networks or temporarily unavailable servers. This implementation uses exponential backoff, where the delay between retries doubles with each attempt up to a maximum of 10 seconds. Only server errors (5xx status codes) and network errors trigger retries, as client errors (4xx) indicate problems with the request itself. The function returns the first response that is not a 5xx, which can still be a 4xx that the caller needs to check with response.ok, or throws the last error once all attempts are exhausted. Note that maxRetries counts total attempts, so the default of 3 means one initial request plus two retries.
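The backoff arithmetic can be checked in isolation. The sketch below extracts the delay formula from the retry loop into a hypothetical `backoffDelay` function and prints the schedule; with three total attempts only the first two delays are ever used. The jitter variant is an optional addition that is not part of the example above.

```javascript
// Delay before retry number `attempt` (0-based): 1s, 2s, 4s, ... capped at 10s.
function backoffDelay(attempt, baseMs = 1000, capMs = 10000) {
  return Math.min(baseMs * 2 ** attempt, capMs)
}

// Hypothetical "full jitter" variant: pick a random delay below the backoff value,
// which spreads out retries from many clients that failed at the same moment.
function backoffDelayWithJitter(attempt, baseMs = 1000, capMs = 10000) {
  return Math.floor(Math.random() * backoffDelay(attempt, baseMs, capMs))
}

const schedule = [0, 1, 2, 3, 4].map((attempt) => backoffDelay(attempt))
console.log(schedule) // [ 1000, 2000, 4000, 8000, 10000 ]
```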

Streaming Response Data

    const fs = require('fs')
    const { pipeline } = require('stream/promises')

    async function downloadFile(url, destinationPath) {
      const response = await fetch(url)

      if (!response.ok) {
        throw new Error(`HTTP error! status: ${response.status}`)
      }

      const fileStream = fs.createWriteStream(destinationPath)

      await pipeline(
        response.body,
        fileStream
      )

      console.log('File downloaded successfully')
    }

    downloadFile('https://example.com/large-file.zip', './download.zip')

For large files, streaming the response avoids loading the entire file into memory. response.body is a web ReadableStream rather than a Node.js stream; pipeline() from the stream/promises module accepts it because web streams are async iterable in Node.js, and it handles error propagation and cleanup while writing to the file stream. If an API needs a classic Node.js stream instead, Readable.fromWeb() from the stream module can convert it. This approach is memory-efficient and works well for downloading large files or processing streaming data.
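Streaming is also useful when you want to process data as it arrives instead of writing it to disk. As a minimal sketch, assuming Node.js (where the body stream is async iterable), the hypothetical helper `countBytes` below walks the body chunk by chunk; the same loop could update a progress indicator or feed a parser.

```javascript
// Hypothetical helper: consume a response body chunk by chunk and count its bytes.
async function countBytes(response) {
  let total = 0

  // In Node.js, response.body (a web ReadableStream) is async iterable,
  // and each chunk is a Uint8Array.
  for await (const chunk of response.body) {
    total += chunk.byteLength
  }

  return total
}

// Works on any Response, including one built locally without a network call.
countBytes(new Response('hello world')).then((total) => {
  console.log(total) // 11
})
```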

Best Practice Note

For production Node.js applications that require advanced features like request retries, interceptors, or automatic JSON parsing, consider using axios instead of native fetch. You can learn more about how to use axios in Node.js for a more feature-rich HTTP client. However, native fetch is perfect for simple use cases where you want to minimize dependencies. This is the same approach we use in CoreUI backend services for making lightweight API calls without external dependencies.