How to implement rate limiting in JavaScript

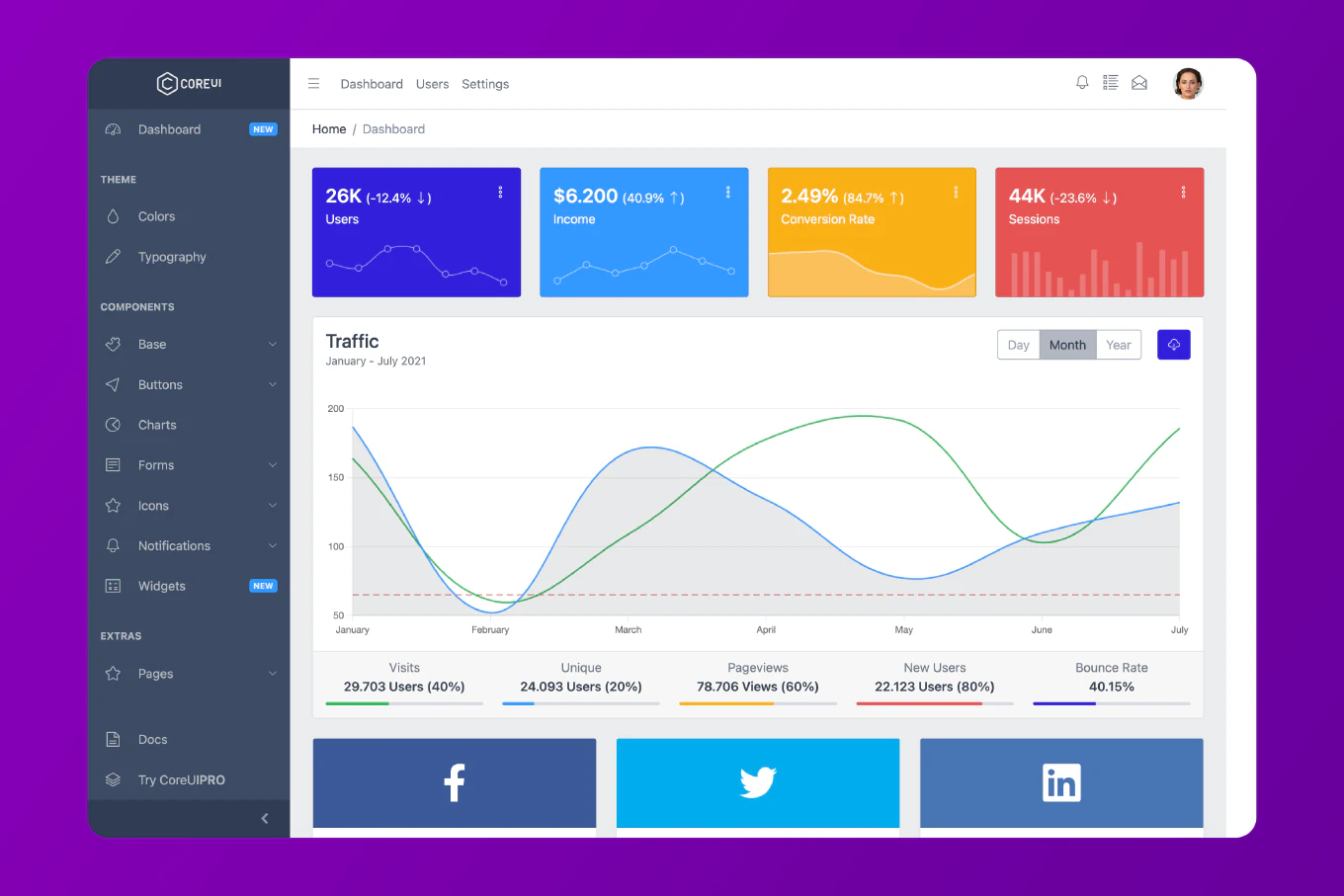

Rate limiting is crucial for protecting APIs from abuse, preventing denial-of-service attacks, and ensuring fair resource usage among users. With over 25 years of experience in software development and as the creator of CoreUI, a widely used open-source UI library, I’ve implemented rate limiting in countless production applications. The most effective and flexible approach is to use the token bucket algorithm, which allows burst traffic while maintaining average rate limits. This method provides smooth rate limiting behavior and is easy to implement both on the client and server side.

Use the token bucket algorithm to implement rate limiting: each request consumes a token, tokens refill at a steady rate, and the bucket's capacity caps how large a burst can be.

    class RateLimiter {
      constructor(maxTokens, refillRate) {
        this.maxTokens = maxTokens // bucket capacity (largest allowed burst)
        this.tokens = maxTokens // start with a full bucket
        this.refillRate = refillRate // tokens added per second
        this.lastRefill = Date.now()
      }

      tryConsume() {
        this.refill()
        // Tokens accumulate fractionally, so require a whole token before consuming one
        if (this.tokens >= 1) {
          this.tokens--
          return true
        }
        return false
      }

      refill() {
        const now = Date.now()
        const elapsed = now - this.lastRefill
        const tokensToAdd = (elapsed / 1000) * this.refillRate
        this.tokens = Math.min(this.maxTokens, this.tokens + tokensToAdd)
        this.lastRefill = now
      }
    }

    // Allow bursts of up to 10 requests, refilled at 2 tokens per second
    const limiter = new RateLimiter(10, 2)

This token bucket implementation maintains a count of available tokens that decreases with each request and refills over time. The maxTokens parameter sets the bucket capacity, while refillRate determines how many tokens are added per second. The tryConsume() method checks if a token is available, consumes it if possible, and returns a boolean indicating success. The refill() method adds tokens based on elapsed time since the last refill, ensuring smooth rate limiting without hard time windows.
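
A single bucket limits all callers together. As a rough sketch of how the same idea could be applied per client, the example below keeps one bucket per key. The class name `KeyedTokenBucket` and the injectable `now` clock are assumptions made for illustration, so the burst and refill behavior can be observed without waiting for real time to pass.

```javascript
// Hypothetical helper: one token bucket per key (for example a user ID or IP).
// `now` is injectable so the behavior can be checked with a simulated clock.
class KeyedTokenBucket {
  constructor(maxTokens, refillRate, now = () => Date.now()) {
    this.maxTokens = maxTokens
    this.refillRate = refillRate // tokens added per second
    this.now = now
    this.buckets = new Map()
  }

  tryConsume(key) {
    const t = this.now()
    let bucket = this.buckets.get(key)
    if (!bucket) {
      // New clients start with a full bucket
      bucket = { tokens: this.maxTokens, lastRefill: t }
      this.buckets.set(key, bucket)
    }
    // Refill in proportion to the time elapsed, capped at the bucket capacity
    const elapsedSeconds = (t - bucket.lastRefill) / 1000
    bucket.tokens = Math.min(this.maxTokens, bucket.tokens + elapsedSeconds * this.refillRate)
    bucket.lastRefill = t
    if (bucket.tokens >= 1) {
      bucket.tokens -= 1
      return true
    }
    return false
  }
}

// Simulated clock, in milliseconds
let fakeTime = 0
const buckets = new KeyedTokenBucket(10, 2, () => fakeTime)

// A burst of 12 requests: the first 10 drain the bucket, the last 2 are refused
const burst = Array.from({ length: 12 }, () => buckets.tryConsume('alice'))

// One second later, 2 tokens have been refilled
fakeTime += 1000
const afterOneSecond = [
  buckets.tryConsume('alice'),
  buckets.tryConsume('alice'),
  buckets.tryConsume('alice')
]
console.log(burst.filter(Boolean).length, afterOneSecond)
```

Each key gets an independent bucket, so one client draining its tokens does not affect another.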

Creating an Express Middleware Rate Limiter

    const express = require('express')

    const app = express()

    // In-memory store: one counter per client key
    const rateLimiters = new Map()

    function createRateLimiter(options = {}) {
      const maxRequests = options.maxRequests ?? 100
      const windowMs = options.windowMs ?? 60000
      const message = options.message ?? 'Too many requests'

      return (req, res, next) => {
        const key = req.ip || req.socket.remoteAddress
        const now = Date.now()

        let limiter = rateLimiters.get(key)
        // Start a new window on the first request or once the previous window has expired
        if (!limiter || now > limiter.resetTime) {
          limiter = { count: 0, resetTime: now + windowMs }
          rateLimiters.set(key, limiter)
        }

        limiter.count++

        res.setHeader('X-RateLimit-Limit', maxRequests)
        res.setHeader('X-RateLimit-Remaining', Math.max(0, maxRequests - limiter.count))
        res.setHeader('X-RateLimit-Reset', new Date(limiter.resetTime).toISOString())

        if (limiter.count > maxRequests) {
          // Retry-After is expressed in whole seconds
          res.setHeader('Retry-After', Math.ceil((limiter.resetTime - now) / 1000))
          return res.status(429).json({ error: message })
        }

        next()
      }
    }

    app.use(createRateLimiter({ maxRequests: 100, windowMs: 60000 }))

This Express middleware implements rate limiting using a fixed window counter. Each client IP address gets its own counter that tracks the number of requests within a time window. When the window expires, the counter resets. The middleware adds the widely used X-RateLimit-* headers to responses, informing clients about their remaining quota. If the limit is exceeded, it returns a 429 status code with an error message. Because the counters live in process memory, they are lost on restart and are not shared between server instances.
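
The fixed window decision can also be expressed without Express, which makes it easier to reason about and test. The following is a minimal sketch under assumptions: `checkFixedWindow` and its return shape are hypothetical names chosen for this example, and the timestamp is passed in explicitly instead of read from the system clock.

```javascript
// Hypothetical helper: the fixed-window decision as a plain function.
// `store` is any Map; `now` is passed in so the logic can be checked without waiting.
function checkFixedWindow(store, key, maxRequests, windowMs, now = Date.now()) {
  let entry = store.get(key)
  // Open a new window on the first request or after the previous one expired
  if (!entry || now > entry.resetTime) {
    entry = { count: 0, resetTime: now + windowMs }
    store.set(key, entry)
  }
  entry.count++
  const allowed = entry.count <= maxRequests
  return {
    allowed,
    remaining: Math.max(0, maxRequests - entry.count),
    // Whole seconds until the window resets, suitable for a Retry-After header
    retryAfterSeconds: allowed ? 0 : Math.ceil((entry.resetTime - now) / 1000)
  }
}

const store = new Map()
const t0 = 1000000 // arbitrary starting timestamp in milliseconds
const clientKey = '203.0.113.7' // documentation-range IP used as an example key

const first = checkFixedWindow(store, clientKey, 2, 60000, t0)
const second = checkFixedWindow(store, clientKey, 2, 60000, t0 + 1000)
const third = checkFixedWindow(store, clientKey, 2, 60000, t0 + 2000)
const afterReset = checkFixedWindow(store, clientKey, 2, 60000, t0 + 60001)
console.log(first, second, third, afterReset)
```

With a limit of 2, the third call is refused and reports 58 seconds until the window resets; the call made just after the window expires is allowed again.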

Implementing Sliding Window Rate Limiting

    class SlidingWindowRateLimiter {
      constructor(maxRequests, windowMs) {
        this.maxRequests = maxRequests
        this.windowMs = windowMs
        this.requests = new Map()
      }

      isAllowed(key) {
        const now = Date.now()
        const windowStart = now - this.windowMs

        if (!this.requests.has(key)) {
          this.requests.set(key, [])
        }

        const timestamps = this.requests.get(key)
        const validTimestamps = timestamps.filter(ts => ts > windowStart)
        this.requests.set(key, validTimestamps)

        if (validTimestamps.length < this.maxRequests) {
          validTimestamps.push(now)
          return true
        }

        return false
      }

      getRemainingRequests(key) {
        const now = Date.now()
        const windowStart = now - this.windowMs
        const timestamps = this.requests.get(key) || []
        const validTimestamps = timestamps.filter(ts => ts > windowStart)
        return Math.max(0, this.maxRequests - validTimestamps.length)
      }
    }

    const limiter = new SlidingWindowRateLimiter(10, 60000)

The sliding window algorithm provides more accurate rate limiting than fixed windows by tracking individual request timestamps. Each request is recorded with its timestamp, and old requests outside the current window are removed. This approach prevents the burst problem where a client can make double the allowed requests by timing them at window boundaries. The getRemainingRequests() method calculates how many requests are still allowed within the current window.
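
To make the boundary problem concrete, the sketch below replays the same request schedule through a fixed window counter and a sliding window log. Both factories (`makeFixedWindow`, `makeSlidingLog`) are simplified, single-client stand-ins written for this comparison, and timestamps are supplied by hand instead of coming from `Date.now()`.

```javascript
// Simplified single-client fixed window: the window opens at the first request
function makeFixedWindow(maxRequests, windowMs) {
  let count = 0
  let resetTime = null
  return (now) => {
    if (resetTime === null || now > resetTime) {
      count = 0
      resetTime = now + windowMs
    }
    count++
    return count <= maxRequests
  }
}

// Simplified single-client sliding window log
function makeSlidingLog(maxRequests, windowMs) {
  let timestamps = []
  return (now) => {
    timestamps = timestamps.filter(ts => ts > now - windowMs)
    if (timestamps.length < maxRequests) {
      timestamps.push(now)
      return true
    }
    return false
  }
}

// Limit: 5 requests per 1000 ms.
// One request opens the window, four arrive just before it closes,
// and five more arrive just after the boundary.
const schedule = [0, 990, 990, 990, 990, 1001, 1001, 1001, 1001, 1001]
const countAllowed = (limiter) => schedule.filter(t => limiter(t)).length

const fixedAllowed = countAllowed(makeFixedWindow(5, 1000))
const slidingAllowed = countAllowed(makeSlidingLog(5, 1000))
console.log({ fixedAllowed, slidingAllowed })
```

The fixed window accepts all 10 requests, 9 of them within an 11 ms span, while the sliding log accepts 6 and never lets more than 5 through in any 1000 ms interval.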

Client-Side Request Throttling

    class RequestThrottler {
      constructor(maxConcurrent, minDelay) {
        this.maxConcurrent = maxConcurrent
        this.minDelay = minDelay
        this.queue = []
        this.activeRequests = 0
        this.lastRequestTime = 0
        this.timer = null // pending wake-up, so only one timer is scheduled at a time
      }

      throttle(requestFn) {
        return new Promise((resolve, reject) => {
          this.queue.push({ requestFn, resolve, reject })
          this.processQueue()
        })
      }

      processQueue() {
        if (this.timer !== null || this.queue.length === 0 || this.activeRequests >= this.maxConcurrent) {
          return
        }

        const timeSinceLastRequest = Date.now() - this.lastRequestTime

        if (timeSinceLastRequest < this.minDelay) {
          // Too soon after the previous request: wake up once the delay has passed
          this.timer = setTimeout(() => {
            this.timer = null
            this.processQueue()
          }, this.minDelay - timeSinceLastRequest)
          return
        }

        const { requestFn, resolve, reject } = this.queue.shift()
        this.activeRequests++
        this.lastRequestTime = Date.now()

        // Start the request without awaiting it here, so the queue keeps moving
        Promise.resolve()
          .then(() => requestFn())
          .then(resolve, reject)
          .finally(() => {
            this.activeRequests--
            this.processQueue()
          })

        // Schedule the next queued request; it will wait for minDelay
        this.processQueue()
      }
    }

    const throttler = new RequestThrottler(3, 1000)

    // Inside an async function or an ES module:
    const result = await throttler.throttle(() => fetch('/api/data'))

Client-side throttling prevents overwhelming the server by limiting concurrent requests and enforcing minimum delays between requests. This implementation uses a queue to manage pending requests and processes them based on concurrency limits and timing constraints. The throttle() method wraps any async function and ensures it executes according to the specified limits. This approach is particularly useful when making multiple API calls from the browser.
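
As an illustration of the concurrency half of this idea, here is a pared-down sketch that queues tasks and keeps at most a fixed number in flight, without the minimum delay. `createConcurrencyLimiter` and `fakeRequest` are hypothetical names; `fakeRequest` stands in for a real network call such as `fetch` and only records how many calls overlap.

```javascript
// Hypothetical helper: run queued tasks with at most `maxConcurrent` in flight
function createConcurrencyLimiter(maxConcurrent) {
  const queue = []
  let active = 0

  const next = () => {
    if (active >= maxConcurrent || queue.length === 0) return
    const { task, resolve, reject } = queue.shift()
    active++
    Promise.resolve()
      .then(() => task())
      .then(resolve, reject)
      .finally(() => {
        active--
        next() // a slot is free again, start the next queued task
      })
  }

  return (task) => new Promise((resolve, reject) => {
    queue.push({ task, resolve, reject })
    next()
  })
}

// Stand-in for a network call (assumption: replace with fetch in real code)
let inFlight = 0
let peak = 0
const fakeRequest = (id) => async () => {
  inFlight++
  peak = Math.max(peak, inFlight)
  await new Promise(resolve => setTimeout(resolve, 5))
  inFlight--
  return id
}

const limit = createConcurrencyLimiter(3)
const allDone = Promise.all([1, 2, 3, 4, 5, 6, 7].map(id => limit(fakeRequest(id))))

allDone.then(results => console.log(results, 'peak concurrency:', peak))
```

Results come back in the order the tasks were submitted because `Promise.all` preserves input order, even though the tasks themselves run at most three at a time.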

Implementing Time-Based Decay Rate Limiting

    class DecayRateLimiter {
      constructor(maxScore, decayRate) {
        this.maxScore = maxScore // highest score a client may accumulate
        this.decayRate = decayRate // score points removed per second
        this.scores = new Map()
      }

      isAllowed(key, cost = 1) {
        const now = Date.now()

        if (!this.scores.has(key)) {
          this.scores.set(key, { score: 0, lastUpdate: now })
        }

        const data = this.scores.get(key)

        // Apply the decay accumulated since the last update
        const elapsed = (now - data.lastUpdate) / 1000
        const decayAmount = elapsed * this.decayRate
        data.score = Math.max(0, data.score - decayAmount)
        data.lastUpdate = now

        // Accept the request only if its cost still fits under the cap
        if (data.score + cost <= this.maxScore) {
          data.score += cost
          return true
        }

        return false
      }

      getScore(key) {
        const data = this.scores.get(key)
        if (!data) return 0

        const elapsed = (Date.now() - data.lastUpdate) / 1000
        const decayAmount = elapsed * this.decayRate
        return Math.max(0, data.score - decayAmount)
      }
    }

    const limiter = new DecayRateLimiter(100, 10)

Decay-based rate limiting assigns a score to each request that gradually decreases over time. This approach is more forgiving than hard limits, as the score naturally reduces without waiting for window resets. Each request adds to the score, and the score decays at a constant rate. This method works well for APIs where some requests are more expensive than others, as you can assign different costs to different operations using the cost parameter.
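
The effect of per-operation costs is easiest to see with numbers. The sketch below is a compact function-style variant of the same decay logic with an injectable clock. The `COSTS` table and its values are invented for illustration and would need tuning for a real API.

```javascript
// Compact decay limiter with an injectable clock (an assumption for testability)
function createDecayLimiter(maxScore, decayRate, now = () => Date.now()) {
  const scores = new Map()
  return (key, cost = 1) => {
    const t = now()
    const data = scores.get(key) || { score: 0, lastUpdate: t }
    const elapsedSeconds = (t - data.lastUpdate) / 1000
    data.score = Math.max(0, data.score - elapsedSeconds * decayRate)
    data.lastUpdate = t
    const allowed = data.score + cost <= maxScore
    if (allowed) data.score += cost
    scores.set(key, data)
    return allowed
  }
}

// Hypothetical cost table: heavier operations consume more of the budget
const COSTS = { read: 1, search: 5, export: 50 }

let clock = 0 // simulated time in milliseconds
const isAllowed = createDecayLimiter(100, 10, () => clock)

const firstExport = isAllowed('user:1', COSTS.export)   // score becomes 50
const secondExport = isAllowed('user:1', COSTS.export)  // score becomes 100
const readWhenFull = isAllowed('user:1', COSTS.read)    // 101 would exceed 100
clock += 1000                                           // score decays to 90
const searchAfterDecay = isAllowed('user:1', COSTS.search) // 95 fits again
console.log(firstExport, secondExport, readWhenFull, searchAfterDecay)
```

Two exports exhaust the budget, a cheap read is then refused, and one second of decay frees enough room for a search.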

Creating a Distributed Rate Limiter with Redis

    // Assumes node-redis v4 or later (promise-based API with camelCase commands)
    const { createClient } = require('redis')

    const client = createClient()

    async function rateLimitWithRedis(key, maxRequests, windowMs) {
      // INCR is atomic and creates the key at 1 if it does not exist,
      // so concurrent servers can never act on the same stale count
      const count = await client.incr(key)

      if (count === 1) {
        // First request of a new window: start the expiry timer
        await client.pExpire(key, windowMs)
      }

      if (count > maxRequests) {
        const ttl = await client.pTTL(key)
        return { allowed: false, remaining: 0, resetIn: ttl }
      }

      return { allowed: true, remaining: maxRequests - count }
    }

    async function main() {
      await client.connect() // the client must be connected before issuing commands
      const result = await rateLimitWithRedis('user:123', 100, 60000)
      console.log('Allowed:', result.allowed)
      console.log('Remaining:', result.remaining)
    }

    main().catch(console.error)

For distributed applications running on multiple servers, store rate limit data in Redis to ensure consistent enforcement across all instances. Each key represents a unique client or user. Redis executes INCR atomically, so incrementing first and then checking the returned count avoids races between servers, whereas reading the counter with GET before writing it does not. The key expires automatically after the time window, resetting the counter. This approach scales horizontally and keeps counts consistent across multiple application servers.
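
To see why atomic increments matter, the sketch below compares two strategies against an in-memory stand-in for Redis. `createFakeRedis` is not a real client: it is an assumption made for this example, it supports only `get`, `set`, and `incr`, it ignores expiry, and it adds a small asynchronous delay to mimic a network round trip.

```javascript
// In-memory stand-in for a Redis client (assumption: get/set/incr only, no expiry)
function createFakeRedis() {
  const data = new Map()
  const tick = () => new Promise(resolve => setTimeout(resolve, 0)) // fake network hop
  return {
    async get(key) {
      await tick()
      return data.has(key) ? String(data.get(key)) : null
    },
    async set(key, value) {
      await tick()
      data.set(key, Number(value))
    },
    async incr(key) {
      await tick()
      const value = (data.get(key) || 0) + 1
      data.set(key, value)
      return value
    }
  }
}

// Read first, then write: concurrent callers can all see the same stale value
async function checkThenAct(client, key, maxRequests) {
  const current = await client.get(key)
  if (current === null) {
    await client.set(key, 1)
    return true
  }
  if (parseInt(current, 10) >= maxRequests) return false
  await client.incr(key)
  return true
}

// Increment first, then decide based on the value the increment returned
async function incrFirst(client, key, maxRequests) {
  const count = await client.incr(key)
  return count <= maxRequests
}

async function raceDemo() {
  const racy = createFakeRedis()
  const safe = createFakeRedis()
  // Three concurrent requests against a limit of 1
  const racyResults = await Promise.all([1, 2, 3].map(() => checkThenAct(racy, 'k', 1)))
  const safeResults = await Promise.all([1, 2, 3].map(() => incrFirst(safe, 'k', 1)))
  return {
    racyAllowed: racyResults.filter(Boolean).length,
    safeAllowed: safeResults.filter(Boolean).length
  }
}

raceDemo().then(result => console.log(result))
```

With a limit of 1, the read-then-write version lets all three concurrent requests through because each one reads the counter before any of them has written it, while the increment-first version admits exactly one.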

Implementing IP-Based Rate Limiting

    function createIPRateLimiter(maxRequests = 100, windowMs = 60000) {
      const ipLimiters = new Map()

      return (req, res, next) => {
        // req.ip respects Express's "trust proxy" setting; the socket address is the raw peer
        const ip = req.ip || req.socket.remoteAddress

        if (!ipLimiters.has(ip)) {
          ipLimiters.set(ip, {
            requests: [],
            blocked: false,
            blockedUntil: 0
          })
        }

        const limiter = ipLimiters.get(ip)
        const now = Date.now()

        // While a block is active, reject immediately and report the remaining wait
        if (limiter.blocked && now < limiter.blockedUntil) {
          const remainingTime = Math.ceil((limiter.blockedUntil - now) / 1000)
          res.setHeader('Retry-After', remainingTime)
          return res.status(429).json({
            error: 'Too many requests',
            retryAfter: remainingTime
          })
        }

        limiter.blocked = false

        // Keep only the timestamps that fall inside the current window
        limiter.requests = limiter.requests.filter(time => time > now - windowMs)
        limiter.requests.push(now)

        if (limiter.requests.length > maxRequests) {
          limiter.blocked = true
          limiter.blockedUntil = now + windowMs
          res.setHeader('Retry-After', Math.ceil(windowMs / 1000))
          return res.status(429).json({
            error: 'Rate limit exceeded',
            retryAfter: Math.ceil(windowMs / 1000)
          })
        }

        res.setHeader('X-RateLimit-Limit', maxRequests)
        res.setHeader('X-RateLimit-Remaining', maxRequests - limiter.requests.length)

        next()
      }
    }

    app.use(createIPRateLimiter(50, 60000))

IP-based rate limiting tracks requests per client IP address, preventing individual users from overwhelming your server. This implementation includes a temporary block mechanism that prevents further requests after exceeding the limit until the time window expires. The retryAfter value in the response tells clients how long to wait before retrying. The rate limit headers inform clients about their current usage and remaining quota, enabling them to self-regulate their request rate.
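
One practical concern with a per-IP Map is that it grows with every new address it sees. As a hedged sketch of one way to bound it, the hypothetical `pruneIdleLimiters` helper below removes entries that have no requests inside the window and no active block. It assumes entries shaped like the ones above (`requests`, `blocked`, `blockedUntil`).

```javascript
// Hypothetical helper: drop entries with no recent requests and no active block
function pruneIdleLimiters(ipLimiters, windowMs, now = Date.now()) {
  let removed = 0
  for (const [ip, limiter] of ipLimiters) {
    const hasRecentRequests = limiter.requests.some(time => time > now - windowMs)
    const stillBlocked = limiter.blocked && now < limiter.blockedUntil
    if (!hasRecentRequests && !stillBlocked) {
      ipLimiters.delete(ip) // deleting during Map iteration is safe in JavaScript
      removed++
    }
  }
  return removed
}

// Example data using documentation-range IP addresses and hand-picked timestamps
const ipLimiters = new Map([
  ['198.51.100.1', { requests: [1000], blocked: false, blockedUntil: 0 }],    // idle
  ['198.51.100.2', { requests: [95000], blocked: false, blockedUntil: 0 }],   // recent request
  ['198.51.100.3', { requests: [1000], blocked: true, blockedUntil: 150000 }] // still blocked
])

const removed = pruneIdleLimiters(ipLimiters, 60000, 100000)
console.log(removed, [...ipLimiters.keys()])
```

In a middleware factory, a function like this could run on a timer, for example with `setInterval`, from inside the closure that owns the Map. The interval length is a trade-off between memory use and cleanup overhead.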

Best Practice Note

Rate limiting should be implemented at multiple levels for robust protection. Use server-side rate limiting to enforce hard limits and prevent abuse, and implement client-side throttling to provide a better user experience by preventing unnecessary requests. For more advanced control over function execution timing, explore how to debounce a function in JavaScript and how to throttle a function in JavaScript. This layered approach is the same strategy we use in CoreUI applications to ensure optimal performance and security while maintaining a smooth user experience.